Prerequisites

Complete the shared setup first:

- Follow Prepare Lambda Environment through:
  - Creating the threat-intel-lambda-layer Lambda layer (Python 3.12 dependencies).
  - Creating the projectx-lambda-feed-exec-role IAM role (base policies).
  - Creating the projectx-lambda-feed-SG security group. This function runs in the VPC and uses it (see Step 7).

You will also need:

- Public threat intelligence feed parser completed, so that public-threat-intelligence-feed-parser is writing JSON objects to your Amazon S3 bucket (for example threat-intelligence-feed-log-bucket).
- Prepare web server PostgreSQL for Lambda ingest completed (or equivalent): PostgreSQL on projectx-prod-websvr-public accepts connections on port 5432 from projectx-lambda-feed-SG, with listen_addresses and pg_hba.conf aligned to your VPC.
- projectx-prod-vpc with the private web subnet(s) where this function will run, route tables you can associate with an S3 gateway VPC endpoint, and projectx-prod-websvr-public (or your database host) reachable on TCP 5432 from the Lambda security group.
- The PostgreSQL dashboard.panel_feed table already created (as in the prepare guide).

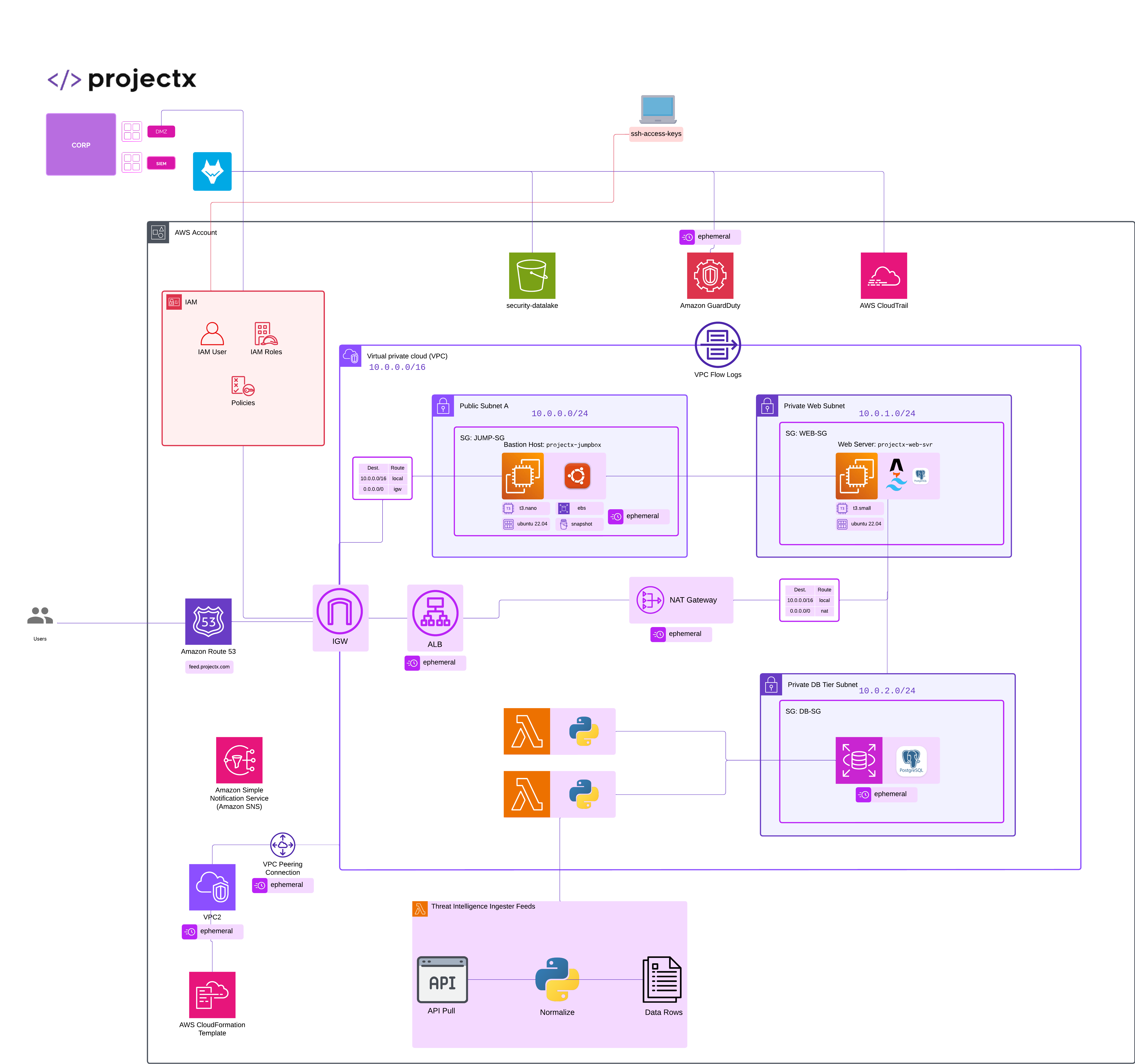

Network topology

Overview

This guide creates one AWS Lambda function:

| Setting | Value |
|---|---|
| Function name | private-db-threat-intelligence-feed-pull |
| Runtime | Python 3.12 |
| Architecture | x86_64 |
| Handler | lambda_function.lambda_handler |

What it does

- Runs when Amazon S3 creates new .json objects in your feed bucket (the same bucket public-threat-intelligence-feed-parser writes to).
- For each S3 event record, calls GetObject, parses the JSON document, and inserts a row into dashboard.panel_feed via psycopg2 (see db.py in the exercise code).
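The per-record flow described above can be sketched in Python. This is a minimal sketch under stated assumptions, not the exercise's lambda_function.py. The names process_records, fetch_object and insert_row are hypothetical. The S3 read and the database insert are passed in as callables, so the loop can run without AWS or PostgreSQL. The 200/207 split mirrors the status codes described in Step 9.

```python
import json
from urllib.parse import unquote_plus


def process_records(event, fetch_object, insert_row):
    """Hypothetical sketch: fetch each S3 object, parse its JSON, insert one row.

    fetch_object(bucket, key) -> bytes and insert_row(document) are injected
    so this loop can be exercised without AWS or PostgreSQL.
    """
    ok, failed = 0, 0
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # S3 notifications URL-encode object keys ("a b.json" arrives as "a+b.json").
        key = unquote_plus(record["s3"]["object"]["key"])
        try:
            document = json.loads(fetch_object(bucket, key))
            insert_row(document)
            ok += 1
        except Exception as exc:  # keep going so one bad object does not block the batch
            print(f"ingest failed for s3://{bucket}/{key}: {exc}")
            failed += 1
    # Assumption: 200 when every record succeeds, 207 when any record fails.
    status = 200 if failed == 0 else 207
    return {"statusCode": status, "body": json.dumps({"inserted": ok, "failed": failed})}
```

In a real handler, fetch_object could wrap a boto3 S3 client, for example `lambda b, k: s3.get_object(Bucket=b, Key=k)["Body"].read()`. insert_row would call into db.py, whose actual interface is not shown here.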

Important: This function runs inside the VPC on your private web subnet(s) so it can reach PostgreSQL on a private address. From a subnet without a NAT path to the internet, S3 must be reachable via an S3 gateway VPC endpoint (or another supported private path). Without that, GetObject can hang until the Lambda times out even though IAM is correct.

Exercise code

Download the Python sources (and any packaged .zip provided there) from the exercise repository:

private-db-threat-intelligence-feed-pull on GitHub

Use those files as the deployment package for this function (see Step 5).

Step 1: IAM permissions for S3

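The role from Prepare Lambda Environment includes logging and, optionally, Secrets Manager access and VPC networking permissions. Because this function runs in a VPC, make sure the VPC networking permissions (for example the AWSLambdaVPCAccessExecutionRole managed policy) are attached. private-db-threat-intelligence-feed-pull also needs permission to read objects from your feed bucket.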

On projectx-lambda-feed-exec-role (or a dedicated role if you prefer), attach an inline policy (or add a statement to an existing policy) that allows GetObject on your bucket:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "ReadThreatIntelFeedObjects",
      "Effect": "Allow",
      "Action": [
        "s3:GetObject"
      ],
      "Resource": "arn:aws:s3:::YOUR_FEED_BUCKET_NAME/*"
    }
  ]
}

Replace YOUR_FEED_BUCKET_NAME with your bucket name (for example threat-intelligence-feed-log-bucket).

If the bucket uses SSE-KMS, also allow kms:Decrypt on that KMS key for the same role. kms:GenerateDataKey is needed only if the role also writes encrypted objects.
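You may prefer to generate the policy rather than edit JSON by hand. The sketch below builds the same statement for a given bucket and, optionally, a kms:Decrypt statement for an SSE-KMS key. The function name read_feed_policy, the second Sid, and the example key ARN in the test are hypothetical.

```python
import json


def read_feed_policy(bucket, kms_key_arn=None):
    """Hypothetical helper: least-privilege read policy for the feed bucket."""
    statements = [
        {
            "Sid": "ReadThreatIntelFeedObjects",
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            # Object-level ARN: the trailing /* covers every key in the bucket.
            "Resource": f"arn:aws:s3:::{bucket}/*",
        }
    ]
    if kms_key_arn:
        # Reading SSE-KMS objects requires kms:Decrypt on the bucket's key.
        statements.append(
            {
                "Sid": "DecryptFeedObjects",
                "Effect": "Allow",
                "Action": ["kms:Decrypt"],
                "Resource": kms_key_arn,
            }
        )
    return {"Version": "2012-10-17", "Statement": statements}


print(json.dumps(read_feed_policy("threat-intelligence-feed-log-bucket"), indent=2))
```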

When you add the S3 event notification or Lambda trigger, the console usually prompts you to allow S3 to invoke this function. Accept the prompt, or add a statement to the function's resource-based policy that grants lambda:InvokeFunction to the s3.amazonaws.com principal.
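If you grant the permission yourself instead of accepting the console prompt, the Lambda AddPermission API takes the fields sketched below. The helper s3_invoke_permission and the statement ID are hypothetical, and 111122223333 is a placeholder account ID. SourceArn and SourceAccount limit the grant to your bucket and account.

```python
def s3_invoke_permission(function_name, bucket, account_id):
    """Hypothetical helper: keyword arguments for the Lambda AddPermission call."""
    return {
        "FunctionName": function_name,
        "StatementId": "AllowS3InvokeFeedPull",  # any ID unique within the function policy
        "Action": "lambda:InvokeFunction",
        "Principal": "s3.amazonaws.com",
        # Limit the grant to one bucket and its owning account.
        "SourceArn": f"arn:aws:s3:::{bucket}",
        "SourceAccount": account_id,
    }


# With boto3 and credentials available, the call would look like:
#   import boto3
#   boto3.client("lambda").add_permission(**s3_invoke_permission(
#       "private-db-threat-intelligence-feed-pull",
#       "threat-intelligence-feed-log-bucket", "111122223333"))
```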

Step 2: Create the Lambda function

- Open the Lambda console → Create function.
- Choose Author from scratch.
- Configure:
  - Function name: private-db-threat-intelligence-feed-pull
  - Runtime: Python 3.12
  - Architecture: x86_64
  - Execution role: Use an existing role → projectx-lambda-feed-exec-role
- Choose Create function.

Step 3: General configuration

Under Configuration → General configuration → Edit:

- Description (suggested): S3-triggered ingest of threat intel JSON into dashboard.panel_feed (VPC).
- Timeout: at least 1 minute. 5 minutes (300 seconds) is reasonable if you process large batches or the database responds slowly.
- Memory: 256 MB or higher. Increase it if invocations run slowly or time out, because Lambda allocates CPU in proportion to memory.

Save.

Step 4: Environment variables

Under Configuration → Environment variables → Edit, set at least:

| Variable | Required | Description |
|---|---|---|
| EC2_ENDPOINT | Yes | PostgreSQL host reachable from the private web subnet (typically the private IP of projectx-prod-websvr-public, e.g. 10.0.x.x). |
| EC2_DB_NAME | No | Database name (default in code: projectxdb). |
| EC2_USERNAME | Yes | Database user (e.g. webapp_rw or projectx_dbadmin, per your lab). |
| EC2_PASSWORD | Yes | User password (prefer Secrets Manager or Parameter Store in production). |
| EC2_PORT | No | Database port (default 5432). |
| EC2_SSLMODE | No | Default require; use disable only if your lab explicitly uses non-TLS local access. |

Example:

EC2_ENDPOINT=10.0.0.240
EC2_DB_NAME=projectxdb
EC2_USERNAME=webapp_rw
EC2_PASSWORD=your-secret
EC2_PORT=5432
EC2_SSLMODE=require

Save.
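The defaults in the table can be expressed as a small settings loader. This is a hypothetical sketch, not the code in db.py, and db_settings is an assumed name. connect_timeout is an added assumption (a standard libpq connection parameter), so that an unreachable database fails quickly instead of consuming the whole Lambda timeout. The returned dictionary is shaped for `psycopg2.connect(**db_settings())`.

```python
import os


def db_settings(env=os.environ):
    """Hypothetical loader for the EC2_* variables; returns psycopg2.connect() kwargs."""
    required = ("EC2_ENDPOINT", "EC2_USERNAME", "EC2_PASSWORD")
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError(f"missing required environment variables: {', '.join(missing)}")
    return {
        "host": env["EC2_ENDPOINT"],
        "dbname": env.get("EC2_DB_NAME", "projectxdb"),
        "user": env["EC2_USERNAME"],
        "password": env["EC2_PASSWORD"],
        "port": int(env.get("EC2_PORT", "5432")),
        "sslmode": env.get("EC2_SSLMODE", "require"),
        # Assumption: fail within 10 seconds rather than waiting out the Lambda timeout.
        "connect_timeout": 10,
    }
```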

Step 5: Deployment package (.zip)

The handler entry point is lambda_function.lambda_handler. lambda_function.py must therefore sit at the root of the zip (not inside a subfolder) and define a function named lambda_handler. db.py must also be at the zip root.

Deployment package contents

Do not put environment files, passwords, or other secrets in the zip. Only the Python modules belong in the deployment package.

- Download the Python files from private-db-threat-intelligence-feed-pull on GitHub (for example lambda_function.py and db.py).
- The repository may include private-lambda-deployment.zip in the parent threat_intelligence_lambda_functions directory, with those modules at the zip root; you can upload that file directly if present.
- To build locally (Unix-style shell), from the directory that contains lambda_function.py and db.py:
- In Lambda Code → Upload from → .zip file, upload the zip.
- Confirm Runtime settings → Handler is lambda_function.lambda_handler.
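As an alternative to a shell zip command, the sketch below uses Python's standard zipfile module to place the two modules at the zip root. build_package is a hypothetical helper. It adds only the files you name, so environment files or other secrets in the same directory are not swept into the package.

```python
import zipfile
from pathlib import Path


def build_package(src_dir, zip_path, modules=("lambda_function.py", "db.py")):
    """Hypothetical helper: write the named modules at the root of a deployment zip."""
    src = Path(src_dir)
    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for name in modules:
            # arcname=name stores the file at the zip root, where the handler path expects it.
            zf.write(src / name, arcname=name)
    return zip_path


# Example: build_package(".", "private-lambda-deployment.zip")
```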

Step 6: Attach the Lambda layer

Under Code → Layers → Add a layer:

- Specify an ARN → paste the Layer version ARN for threat-intel-lambda-layer (created in Prepare Lambda Environment).
- The layer's compatible runtimes must include Python 3.12.

The layer must provide psycopg2 (and any other shared dependencies), built for Linux x86_64 and Python 3.12, so that imports succeed. boto3 is already included in the Lambda Python runtime and does not need to be in the layer.
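If the function fails with an error such as "Unable to import module 'lambda_function': No module named 'psycopg2'", a quick import check can narrow down the cause. The handler module itself never loads in that case, so run the check from a throwaway function that uses the same layer, runtime, and architecture. failed_imports is a hypothetical helper. It performs real imports rather than only locating packages, so a layer built for the wrong Python version or architecture also shows up as a failure.

```python
import importlib


def failed_imports(names=("psycopg2", "boto3")):
    """Hypothetical check: try each import and report the ones that fail."""
    failures = {}
    for name in names:
        try:
            importlib.import_module(name)
        except ImportError as exc:
            # ModuleNotFoundError (a missing layer) is a subclass of ImportError;
            # a binary built for another Python or architecture also fails here.
            failures[name] = str(exc)
    return failures


# Example handler body for the throwaway function: return failed_imports()
```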

Step 7: VPC (private web subnet)

Attach this function to your private web subnet(s) so it can reach PostgreSQL on a private IP.

Under Configuration → VPC → Edit:

- VPC: projectx-prod-vpc
- Subnets: your private web subnet(s) (use the subnet IDs your lab labels “private web,” unless your design intentionally uses others).
- Security groups: projectx-lambda-feed-SG (or a group that allows outbound TCP 5432 to the database instance security group).

Save and wait for ENI configuration to finish.

On the database host security group, allow inbound PostgreSQL (5432) from projectx-lambda-feed-SG (source type Security group) where possible, rather than only a broad CIDR.

If these subnets have no NAT path to the internet, or your organization routes all AWS API traffic privately, make sure S3 is reachable from them. One way is an S3 gateway endpoint associated with the subnets' route tables, which adds a route sending the S3 prefix list to the endpoint. Misconfigured VPC networking usually shows up as timeouts when calling GetObject.
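To tell an S3 routing problem apart from a database reachability problem, you can run a short TCP probe from a temporary test invocation with the same VPC configuration. This is a hypothetical diagnostic, not part of the exercise code. The S3 hostname in the usage note assumes us-east-2, so substitute your Region.

```python
import socket


def tcp_reachable(host, port, timeout=3.0):
    """Hypothetical probe: True if a TCP connection opens within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers timeouts, refused connections, and DNS failures
        return False
```

To check the database path, call `tcp_reachable(os.environ["EC2_ENDPOINT"], 5432)`. To check the S3 path, call `tcp_reachable("s3.us-east-2.amazonaws.com", 443)`.

- If the database probe succeeds but the S3 probe fails, look at the gateway endpoint, its route table associations, and the security group's outbound rules.
- If the database probe fails, look at the security groups and PostgreSQL's listen_addresses.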

Step 8: S3 event notifications

Configure the same bucket public-threat-intelligence-feed-parser uses so that new .json objects invoke private-db-threat-intelligence-feed-pull.

Option A — S3 bucket console

- Open S3 → your feed bucket → Properties → Event notifications → Create event notification.
- Name: e.g. threat-intel-feed-ingest
- Prefix (optional): match the key prefix that public-threat-intelligence-feed-parser (Lambda 1) writes to, if it uses one (for example threat-intel/); leave it empty to cover the whole bucket.
- Suffix: .json
- Event types: s3:ObjectCreated:* (or Put / Post / CompleteMultipartUpload as needed).
- Destination: Lambda function → private-db-threat-intelligence-feed-pull
- Confirm the prompt so S3 may invoke the function.
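For a scripted setup, Option A corresponds to the S3 PutBucketNotificationConfiguration API. The helper below is a hypothetical sketch that builds its NotificationConfiguration argument; the function ARN in the usage comment uses the placeholder account 111122223333. This API replaces the bucket's entire notification configuration. Merge in any existing notifications (from GetBucketNotificationConfiguration) before applying it. Also grant S3 permission to invoke the function first, because S3 validates the destination when you save.

```python
def feed_notification_config(function_arn, suffix=".json", prefix=""):
    """Hypothetical helper: NotificationConfiguration for .json object creates."""
    rules = [{"Name": "suffix", "Value": suffix}]
    if prefix:
        # Optional prefix filter, e.g. the key prefix Lambda 1 writes to.
        rules.insert(0, {"Name": "prefix", "Value": prefix})
    return {
        "LambdaFunctionConfigurations": [
            {
                "Id": "threat-intel-feed-ingest",
                "LambdaFunctionArn": function_arn,
                "Events": ["s3:ObjectCreated:*"],
                "Filter": {"Key": {"FilterRules": rules}},
            }
        ]
    }


# With boto3 (note: replaces existing notifications on the bucket):
#   import boto3
#   boto3.client("s3").put_bucket_notification_configuration(
#       Bucket="threat-intelligence-feed-log-bucket",
#       NotificationConfiguration=feed_notification_config(
#           "arn:aws:lambda:us-east-2:111122223333:function:private-db-threat-intelligence-feed-pull"))
```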

Option B — Lambda console

- Open private-db-threat-intelligence-feed-pull → Add trigger → S3.
- Select the bucket, the object-created event type, and an optional prefix/suffix; enable the trigger, and save.

Do not configure this function to write objects that match the same prefix/suffix pattern, or you risk a recursive invoke loop.

Step 9: Test event

Under Test → Create new event, use a JSON body shaped like an S3 notification (adjust bucket, key, and Region):

{
  "Records": [
    {
      "eventSource": "aws:s3",
      "awsRegion": "us-east-2",
      "s3": {
        "bucket": { "name": "threat-intelligence-feed-log-bucket" },
        "object": { "key": "2026-03-31/security_news_rss.json" }
      }
    }
  ]
}

The key must match a real object in the bucket (upload one with public-threat-intelligence-feed-parser or manually). If the key contains special characters, use the URL-encoded form S3 sends in production events (the code decodes + and % sequences).
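To produce a test event for a key with spaces or other special characters, you can encode the key the way S3 notifications do, where a space becomes "+". The sketch below is a hypothetical helper that emits the same minimal shape as the JSON above. Leaving "/" unencoded is an assumption about how your keys look.

```python
import json
from urllib.parse import quote_plus, unquote_plus


def s3_test_event(bucket, key, region="us-east-2"):
    """Hypothetical helper: minimal S3 ObjectCreated-shaped event with an encoded key."""
    return {
        "Records": [
            {
                "eventSource": "aws:s3",
                "awsRegion": region,
                "s3": {
                    "bucket": {"name": bucket},
                    # Spaces become '+', other reserved characters become %XX; '/' is kept.
                    "object": {"key": quote_plus(key, safe="/")},
                },
            }
        ]
    }


event = s3_test_event("threat-intelligence-feed-log-bucket", "2026-03-31/security news (1).json")
encoded = event["Records"][0]["s3"]["object"]["key"]
print(encoded)                # 2026-03-31/security+news+%281%29.json
print(unquote_plus(encoded))  # 2026-03-31/security news (1).json
print(json.dumps(event, indent=2))  # paste into the Lambda test event editor
```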

Run Test. On success, the function result contains status code 200 (or 207 if some records in a batch fail), dashboard.panel_feed receives a new row, and the CloudWatch logs show the GetObject call followed by a successful database connection.

Check CloudWatch Logs for the log group /aws/lambda/private-db-threat-intelligence-feed-pull if anything fails.

Step 10: Verification checklist

- [ ] Function name private-db-threat-intelligence-feed-pull, handler lambda_function.lambda_handler
- [ ] Python 3.12, x86_64
- [ ] EC2_* environment variables set to reach PostgreSQL from the VPC
- [ ] IAM allows s3:GetObject on arn:aws:s3:::YOUR_FEED_BUCKET_NAME/* and S3 can invoke the function
- [ ] VPC: private web subnet(s) and projectx-lambda-feed-SG; database allows 5432 from Lambda
- [ ] S3 gateway endpoint and routes (or other private path) so GetObject does not time out
- [ ] Layer threat-intel-lambda-layer attached
- [ ] S3 event notification or trigger for .json object creates
- [ ] Test event with a real S3 object key succeeds

Next steps

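- Add CloudWatch alarms (for example on the function's Errors and Duration metrics) or Amazon EventBridge rules if you want visibility when ingest fails or latency grows.
- Store EC2_PASSWORD in Secrets Manager instead of a plain environment variable, and narrow the S3 prefix/suffix filter so only Lambda 1 output keys invoke this function.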
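One way to act on the Secrets Manager suggestion is sketched below. It is a hypothetical helper, not part of the exercise code. EC2_PASSWORD_SECRET_ID is an assumed variable name, and the secret is assumed to be stored as JSON with a "password" field. In a private subnet without a NAT path, the function also needs a Secrets Manager interface VPC endpoint, and the role needs secretsmanager:GetSecretValue on that secret.

```python
import json
import os


def db_password(secrets_client=None, env=os.environ):
    """Hypothetical helper: prefer a Secrets Manager secret, fall back to EC2_PASSWORD.

    EC2_PASSWORD_SECRET_ID is an assumed variable name; the secret is assumed
    to be JSON such as {"password": "..."}.
    """
    secret_id = env.get("EC2_PASSWORD_SECRET_ID")
    if not secret_id:
        return env["EC2_PASSWORD"]
    if secrets_client is None:
        import boto3  # included in the Lambda Python runtime

        secrets_client = boto3.client("secretsmanager")
    response = secrets_client.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])["password"]
```

Calling it once at module load (outside lambda_handler) lets warm invocations reuse the value instead of calling Secrets Manager on every event.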