Prerequisites¶

- projectx-prod-vpc has been created with subnets configured.

- projectx-prod-jumpbox EC2 instance exists and is accessible.

- AWS CLI configured with appropriate credentials.

- Your AWS username for the bucket naming convention.

- project-x-sec-box configured with Wazuh.

- S3 security datalake bucket has been created with the vpc-flow/ folder structure.

- S3 bucket policy configured to allow VPC Flow Logs service to write logs.

Note

This guide is written for Wazuh version 4.9.2. UI elements and navigation paths may differ in other versions.

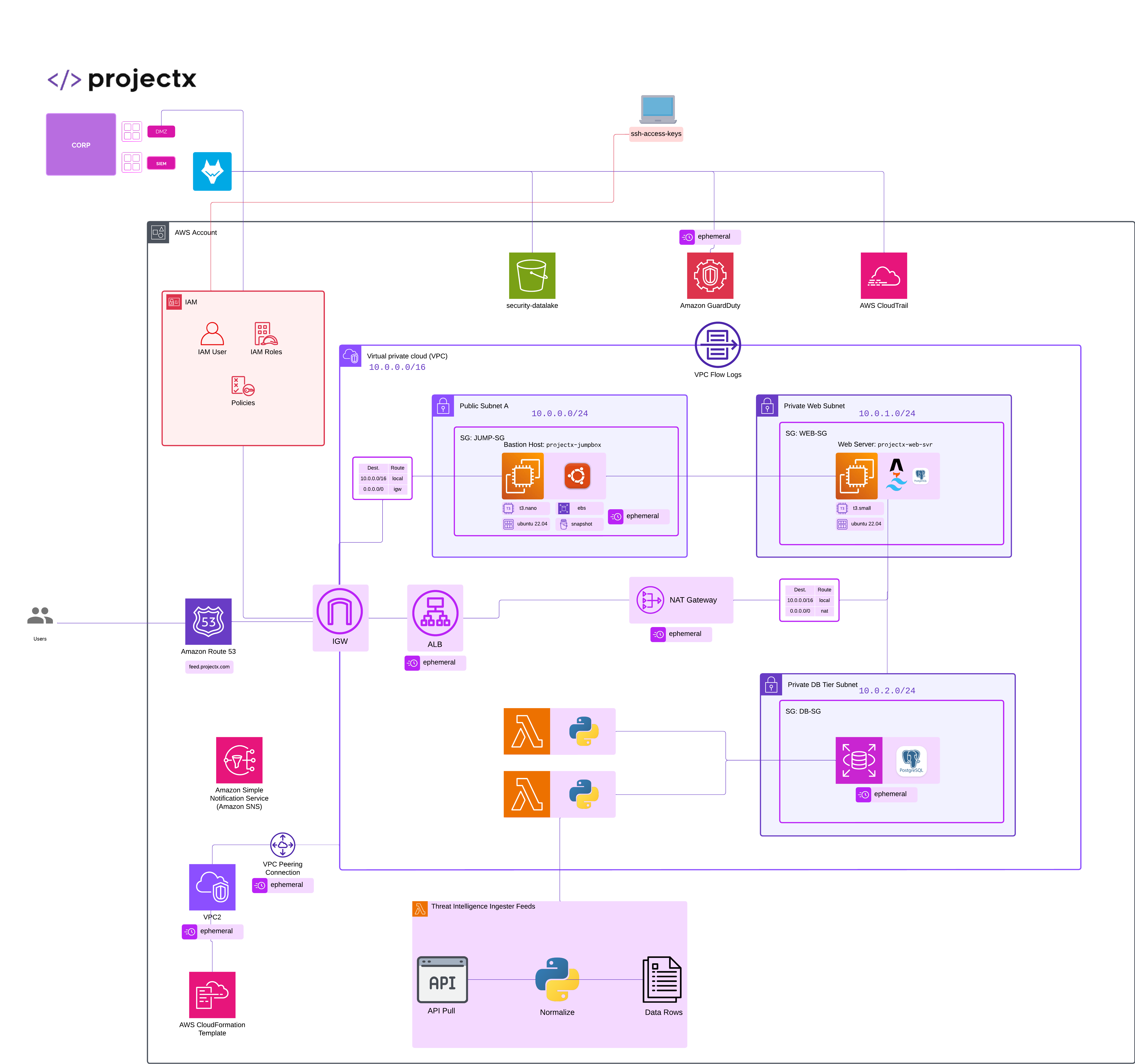

Network Topology¶

Resources¶

- https://documentation.wazuh.com/current/cloud-security/amazon/services/supported-services/vpc.html

Overview¶

What are VPC Flow Logs?¶

VPC Flow Logs is a feature that enables you to capture information about the IP traffic going to and from network interfaces in your VPC. Flow log data can be published to Amazon CloudWatch Logs or Amazon S3.

VPC Flow Logs capture network flow information including:

- Source and Destination: IP addresses and ports for traffic flows

- Protocol: The IANA protocol number of the traffic (for example, 6 for TCP, 17 for UDP, 1 for ICMP)

- Traffic Direction: Whether the flow is ingress or egress (available as the flow-direction field in custom log formats)

- Packet and Byte Counts: Volume of traffic

- Action: Whether the traffic was allowed or denied by security groups or network ACLs

👉 VPC Flow Logs are essential for network security monitoring, troubleshooting connectivity issues, and detecting anomalous network behavior.
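To make the fields listed above concrete, here is a minimal Python sketch that splits one record in the default (version 2) format into named fields. The sample record uses made-up values, and the helper name parse_flow_record and the small protocol lookup table are illustrative assumptions, not part of any AWS or Wazuh tooling.

```python
from typing import Dict

# Field order of the default (version 2) flow log record format.
DEFAULT_FIELDS = [
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
]

# A few common IANA protocol numbers, for readability only.
PROTOCOL_NAMES = {"1": "ICMP", "6": "TCP", "17": "UDP"}


def parse_flow_record(line: str) -> Dict[str, str]:
    """Split one space-separated default-format record into named fields."""
    values = line.split()
    if len(values) != len(DEFAULT_FIELDS):
        raise ValueError(
            f"expected {len(DEFAULT_FIELDS)} fields, got {len(values)}"
        )
    record = dict(zip(DEFAULT_FIELDS, values))
    record["protocol_name"] = PROTOCOL_NAMES.get(record["protocol"], "OTHER")
    return record


# Illustrative record (made-up values): an accepted SSH flow.
sample = (
    "2 123456789010 eni-1235b8ca123456789 172.31.16.139 172.31.16.21 "
    "20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK"
)
rec = parse_flow_record(sample)
print(rec["srcaddr"], "->", rec["dstaddr"], rec["protocol_name"], rec["action"])
```

All values are kept as strings here; a real pipeline would likely convert ports, counters, and timestamps to integers.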

VPC Flow Logs can be enabled at different levels:

- VPC Level: Captures all traffic for all network interfaces in the VPC

- Subnet Level: Captures all traffic for all network interfaces in the subnet

- Network Interface Level: Captures traffic for a specific network interface

For this guide, we'll create VPC Flow Logs at the VPC level to capture all network traffic in your projectx-prod-vpc and deliver those logs to your S3 security datalake.

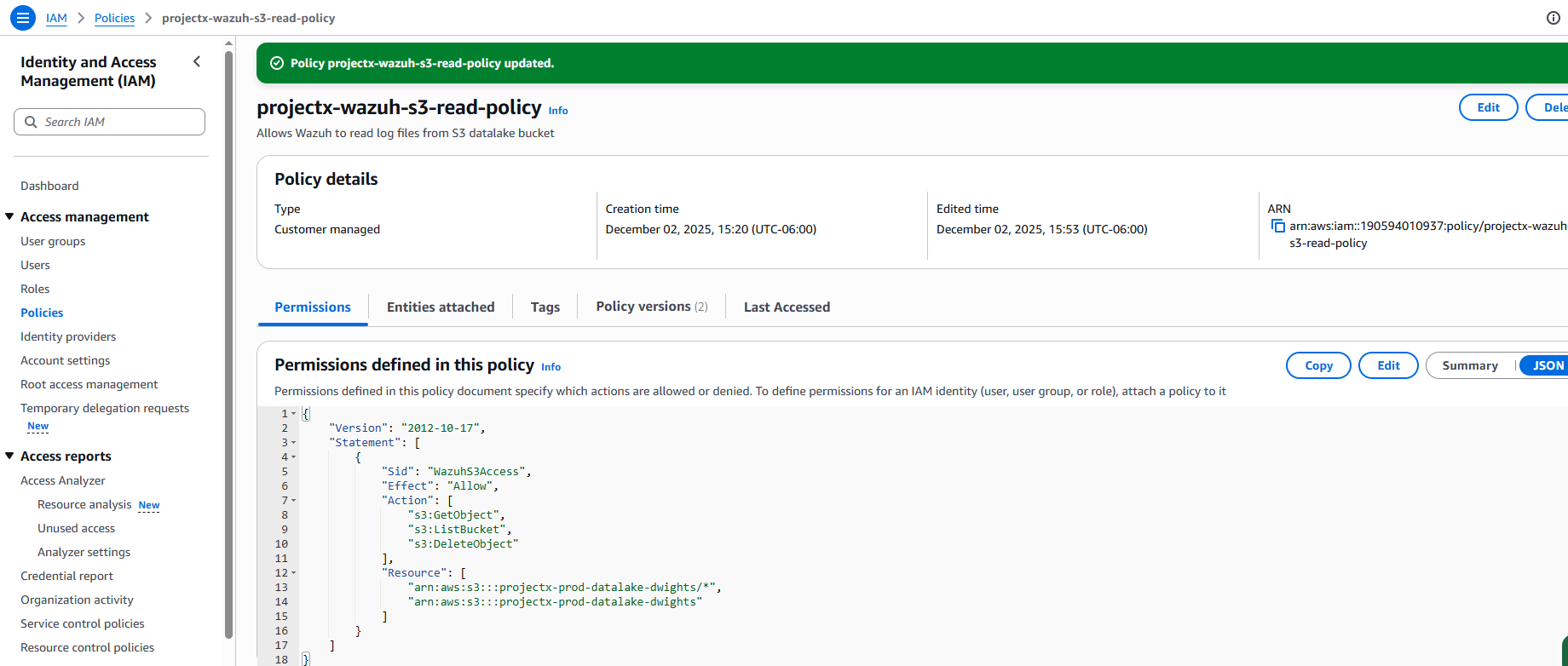

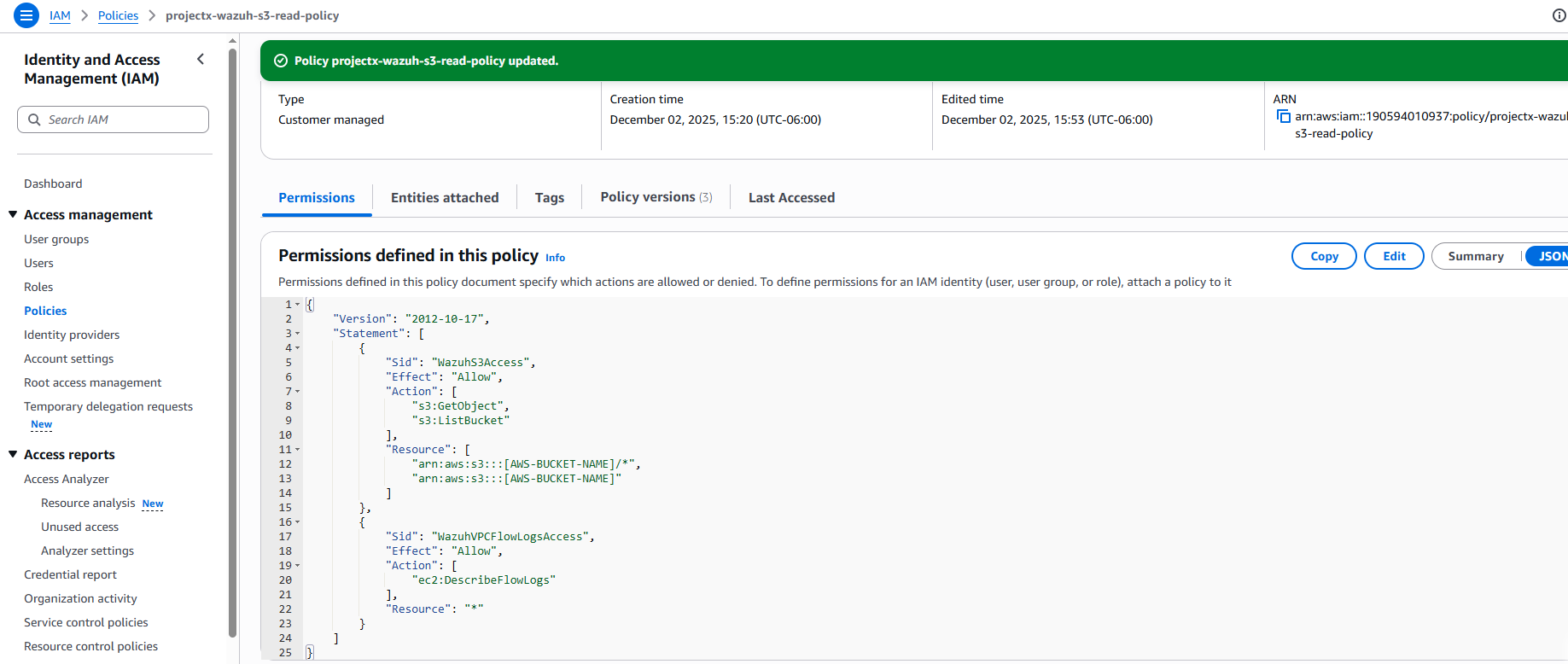

Update IAM Policy for VPC Flow Logs¶

To enable Wazuh to query and describe VPC Flow Logs, we need to add the ec2:DescribeFlowLogs permission to the existing projectx-wazuh-s3-read-policy that was created for the projectx-wazuh-s3-user.

Navigate to IAM Policy¶

Open the IAM service in the AWS Console.

In the left navigation pane, select Policies.

Search for projectx-wazuh-s3-read-policy.

Click on the policy name.

Edit Policy¶

Click Edit.

Select the JSON tab.

You should see the existing policy structure. Add a new statement to include EC2 permissions for describing flow logs:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "WazuhS3Access",
      "Effect": "Allow",
      "Action": [
        "s3:GetObject",
        "s3:ListBucket"
      ],
      "Resource": [
        "arn:aws:s3:::[AWS-BUCKET-NAME]/*",
        "arn:aws:s3:::[AWS-BUCKET-NAME]"
      ]
    },
    {
      "Sid": "WazuhVPCFlowLogsAccess",
      "Effect": "Allow",
      "Action": [
        "ec2:DescribeFlowLogs"
      ],
      "Resource": "*"
    }
  ]
}

👉 Replace [AWS-BUCKET-NAME] with your actual S3 bucket name if it's still a placeholder.

👉 The ec2:DescribeFlowLogs permission allows Wazuh to query VPC Flow Logs metadata, which helps with log processing and correlation.

Click Next.

Review the policy changes.

Click Save changes.

Verify Policy Update¶

Navigate back to Policies.

Select projectx-wazuh-s3-read-policy.

Go to the Permissions tab.

You should see both statements:

- WazuhS3Access - S3 read permissions

- WazuhVPCFlowLogsAccess - EC2 DescribeFlowLogs permission

👉 The policy is now updated and will automatically apply to the projectx-wazuh-s3-user since it's attached to that user.
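If you keep a local copy of the policy JSON, a quick offline check can catch syntax errors or a leftover placeholder before you paste it into the console. The sketch below is one possible way to do that using only the Python standard library; the function name summarize_policy is hypothetical, and the policy text is the one shown in this guide.

```python
import json

# Policy text from this guide; the bucket name is still a placeholder here.
POLICY_JSON = """
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "WazuhS3Access",
      "Effect": "Allow",
      "Action": ["s3:GetObject", "s3:ListBucket"],
      "Resource": [
        "arn:aws:s3:::[AWS-BUCKET-NAME]/*",
        "arn:aws:s3:::[AWS-BUCKET-NAME]"
      ]
    },
    {
      "Sid": "WazuhVPCFlowLogsAccess",
      "Effect": "Allow",
      "Action": ["ec2:DescribeFlowLogs"],
      "Resource": "*"
    }
  ]
}
"""


def summarize_policy(policy_text: str) -> dict:
    """Parse the policy and map each statement Sid to its list of actions."""
    policy = json.loads(policy_text)  # raises ValueError on malformed JSON
    return {stmt["Sid"]: stmt["Action"] for stmt in policy["Statement"]}


summary = summarize_policy(POLICY_JSON)
has_placeholder = "[AWS-BUCKET-NAME]" in POLICY_JSON

for sid, actions in summary.items():
    print(sid, actions)
if has_placeholder:
    print("Reminder: replace [AWS-BUCKET-NAME] with your bucket name")
```

This only checks the JSON structure locally; it does not confirm that the policy was saved in IAM.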

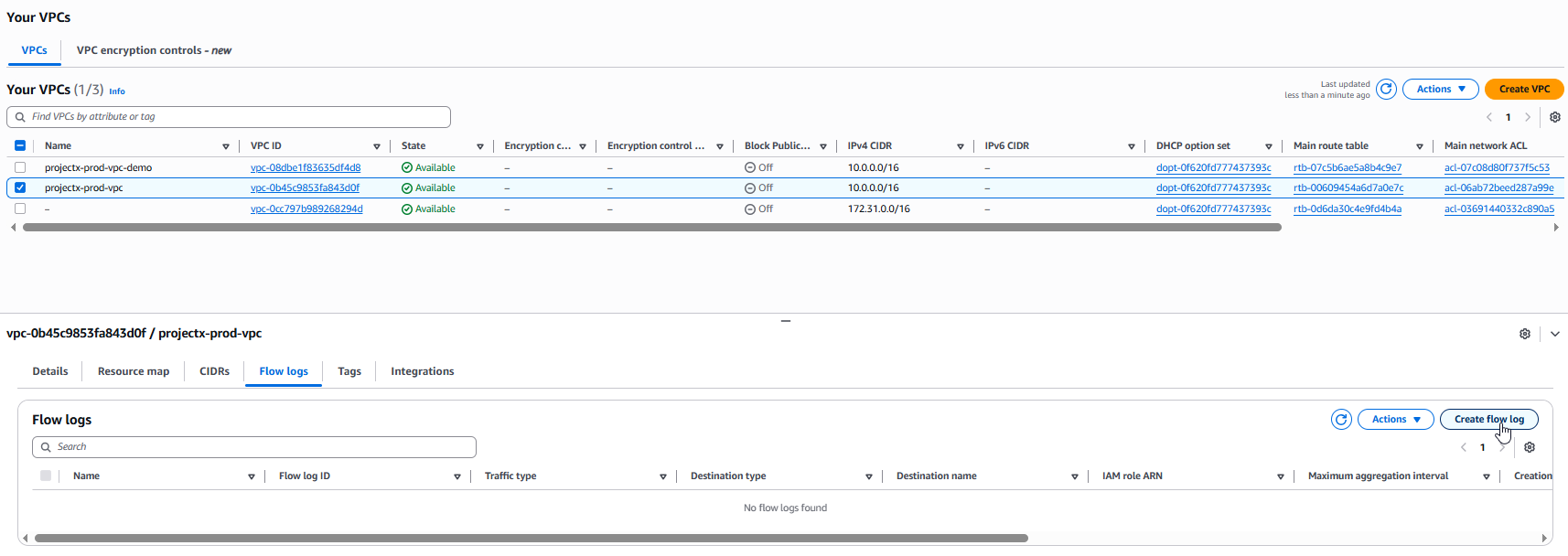

Create VPC Flow Log¶

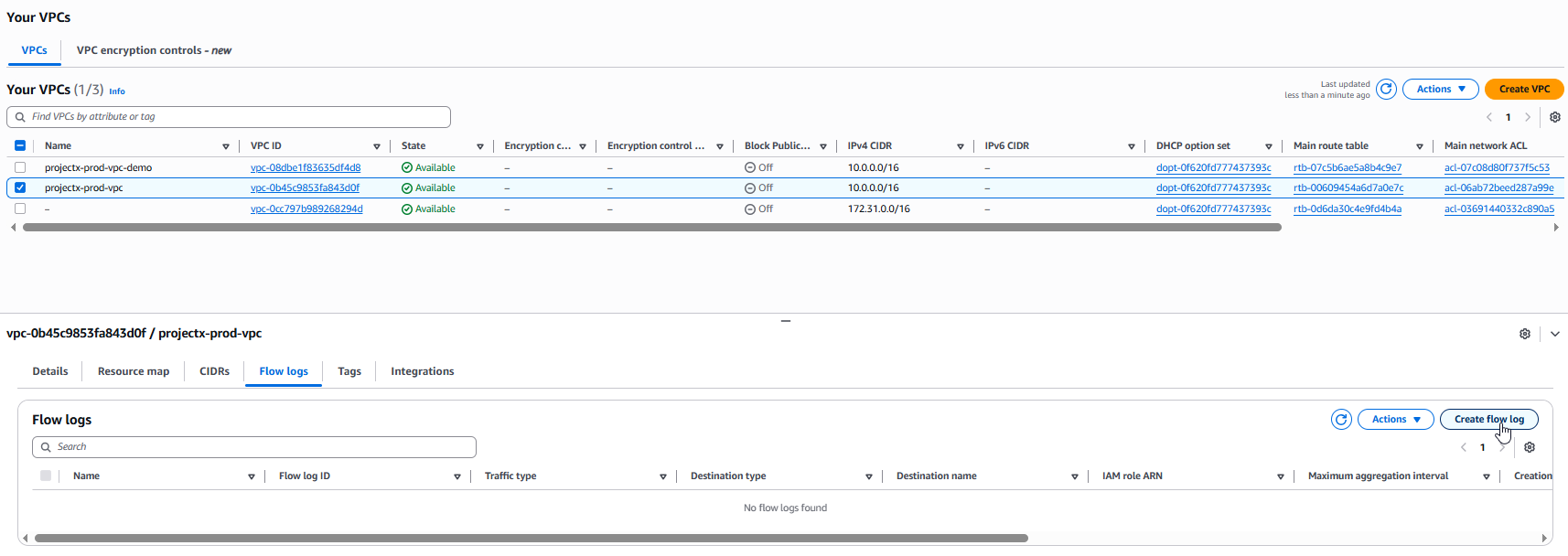

Navigate to VPC¶

Open the VPC service in the AWS Console.

In the left navigation pane, select Your VPCs.

Select your VPC: projectx-prod-vpc

Click the Flow logs tab.

Click Create flow log.

Configure Flow Log Details¶

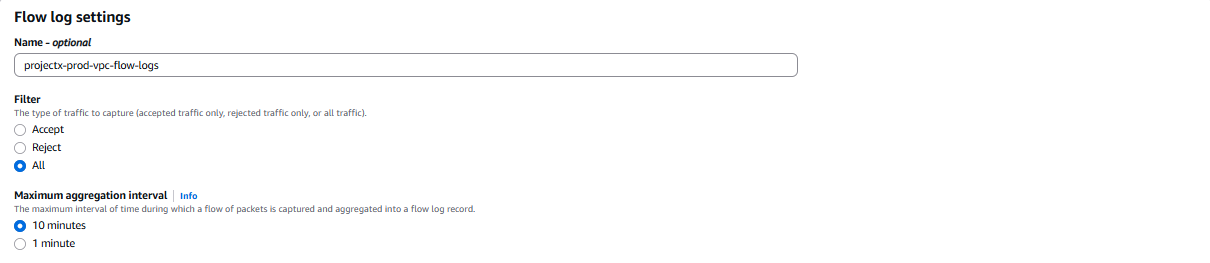

Name and Filter¶

- Flow log name: projectx-prod-vpc-flow-logs

- Filter: Select All

  👉 This captures all traffic (accepted and rejected). You can also choose Accept or Reject to filter specific traffic types.

- Maximum aggregation interval: Select 10 minutes

  👉 This sets the time window over which traffic is aggregated into a single flow log record. Choosing 1 minute instead gives closer to real-time visibility but produces more log data.

Destination Type¶

- Destination type: Select Send to an S3 bucket

- S3 bucket ARN: Enter arn:aws:s3:::projectx-prod-datalake-[username]/vpc-flow/

  👉 Replace [username] with your actual AWS username.

  👉 The /vpc-flow/ suffix ensures logs are organized in a dedicated folder.

- Log record format: Select Default

  👉 The default format includes the standard version 2 fields (addresses, ports, protocol, packet and byte counts, timestamps, action, and log status). You can choose a custom format if you need additional fields.
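The console settings above can also be written down as a parameter set. The sketch below builds a dictionary whose keys follow the EC2 CreateFlowLogs request parameters, so it could be passed to boto3 as `boto3.client("ec2").create_flow_logs(**params)`. It does not call AWS itself. The function name build_flow_log_params and the example VPC ID and username are hypothetical.

```python
def build_flow_log_params(vpc_id: str, username: str) -> dict:
    """Mirror the console choices in this guide as CreateFlowLogs parameters."""
    bucket_arn = f"arn:aws:s3:::projectx-prod-datalake-{username}/vpc-flow/"
    return {
        "ResourceIds": [vpc_id],
        "ResourceType": "VPC",          # flow log at the VPC level
        "TrafficType": "ALL",           # Filter: All (accepted and rejected)
        "LogDestinationType": "s3",     # Send to an S3 bucket
        "LogDestination": bucket_arn,
        "MaxAggregationInterval": 600,  # 10 minutes, in seconds (60 = 1 minute)
    }


# Hypothetical VPC ID and username, for illustration only.
params = build_flow_log_params("vpc-0123456789abcdef0", "johnsmith")
for key, value in params.items():
    print(f"{key}: {value}")
```

Leaving out a log format parameter corresponds to selecting the Default log record format in the console.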

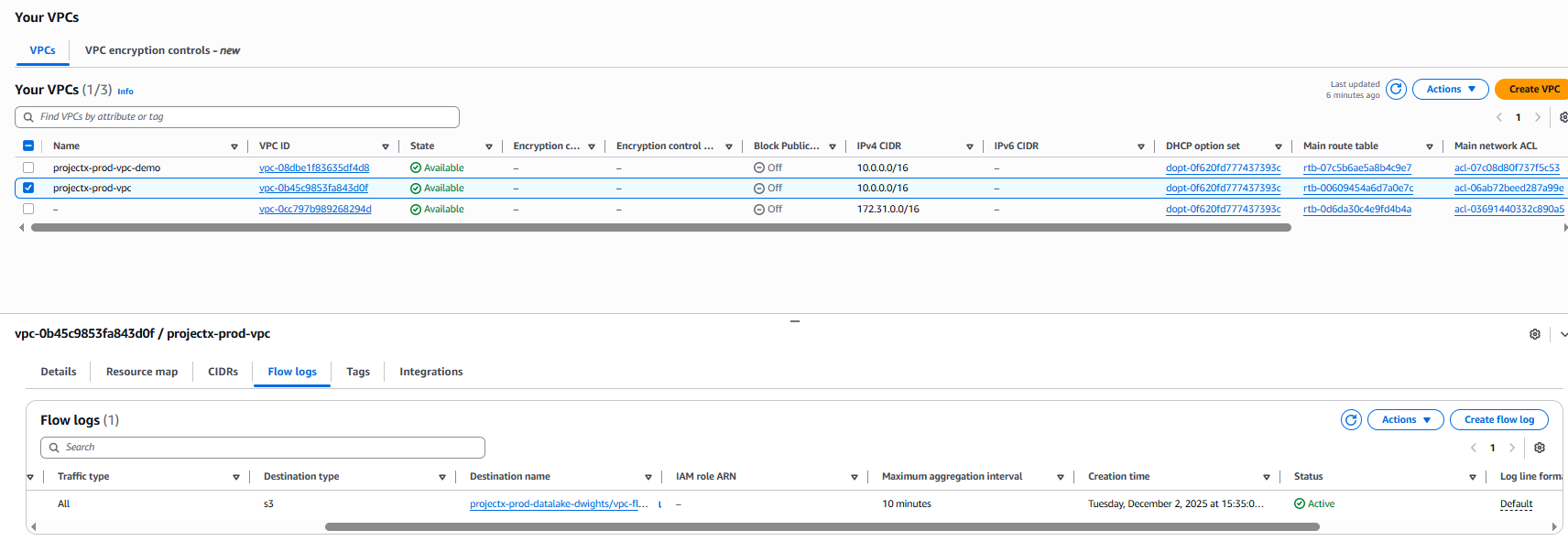

Verify Flow Log Status¶

After creation, you should see your flow log listed with a status of Active.

👉 It may take a few minutes for VPC Flow Logs to start delivering log files to your S3 bucket. You can generate flow log records by connecting to your jumpbox over SSH. Log files are typically delivered within 1-5 minutes of network activity.

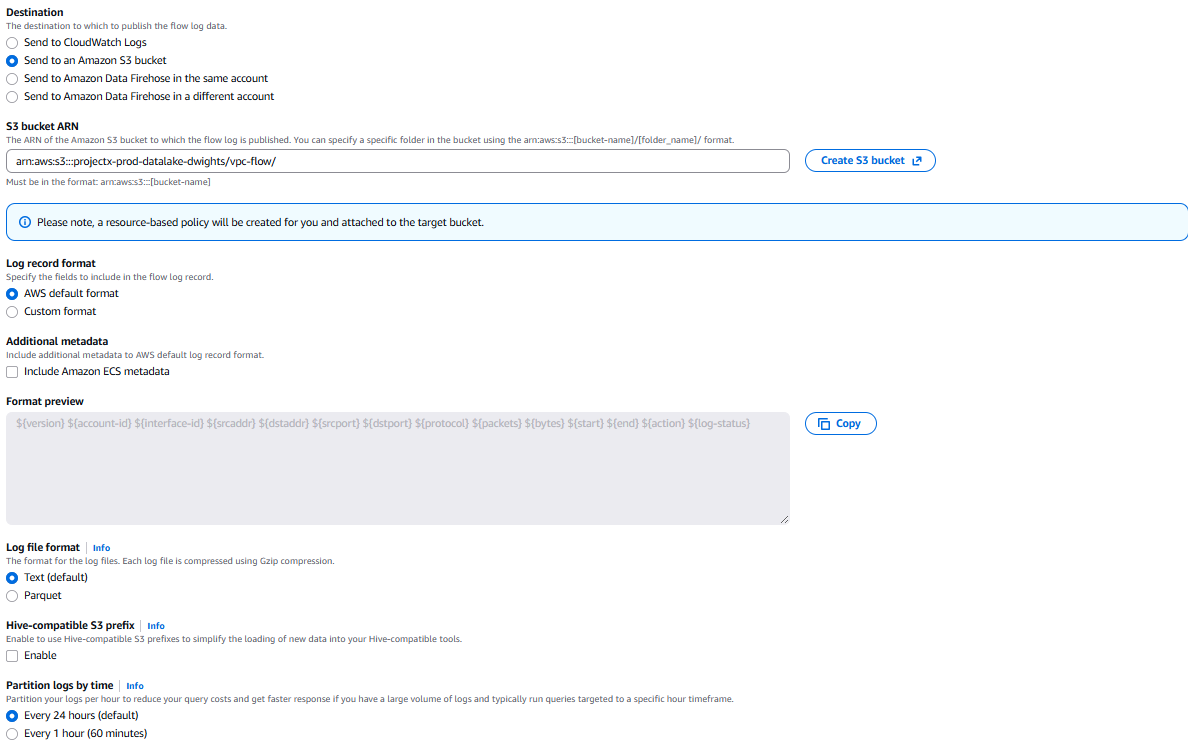

Configure S3 Lifecycle Policy for VPC Flow Logs¶

To automatically delete VPC Flow Logs after 14 days, we'll create a lifecycle policy that applies specifically to the vpc-flow/ prefix in your S3 bucket.

Navigate to S3 Lifecycle Rules¶

Open the S3 service in the AWS Console.

Select your bucket: projectx-prod-datalake-[username]

Go to the Management tab.

Scroll down to Lifecycle rules.

Click Create lifecycle rule.

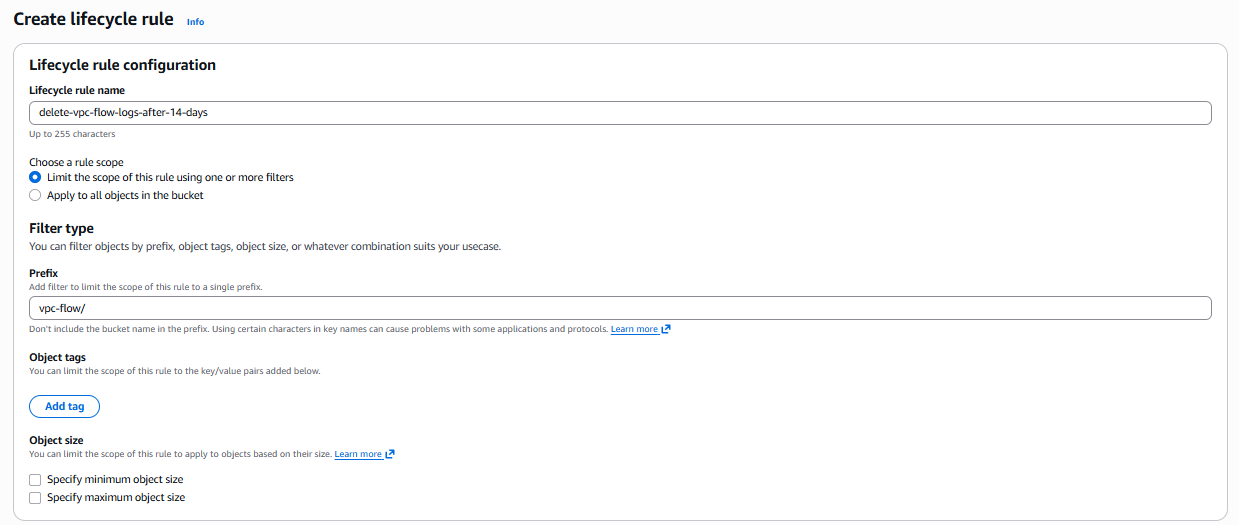

Configure Lifecycle Rule¶

Basic Configuration¶

- Lifecycle rule name: delete-vpc-flow-logs-after-14-days

- Rule scope: Select Limit the scope of this rule using one or more filters

- Prefix: vpc-flow/

  👉 This ensures the rule only applies to VPC Flow Logs, not other data in the bucket.

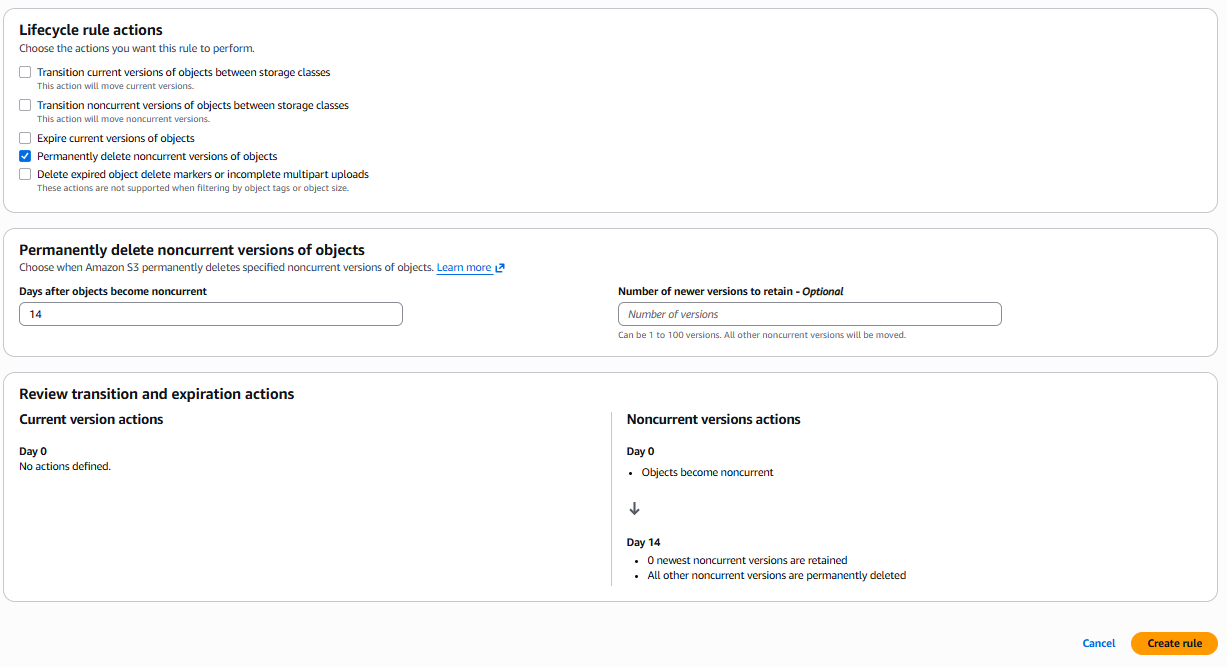

Lifecycle Rule Actions¶

Select Expire current versions of objects.

- Days after object creation:

14

👉 This will automatically delete VPC Flow Log files 14 days after they are created, helping manage storage costs and comply with data retention policies.

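👉 Note: In a bucket without versioning, "Expire current versions of objects" permanently deletes objects after the specified number of days. In a versioned bucket, it adds a delete marker and the previous versions remain until a separate rule removes them.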

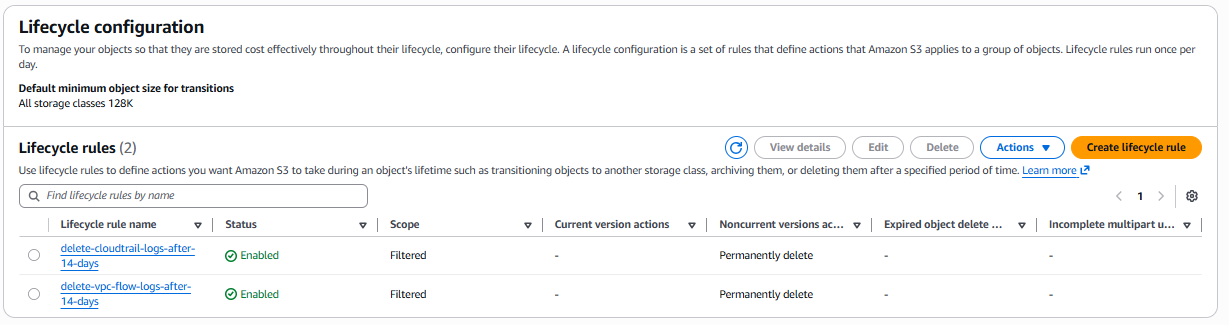

Verify Lifecycle Rule¶

Navigate back to Management ➔ Lifecycle rules.

You should see your rule delete-vpc-flow-logs-after-14-days listed.

👉 The lifecycle rule will automatically apply to all objects under the vpc-flow/ prefix. Objects will be deleted 14 days after creation.
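For reference, the same rule can be expressed as a lifecycle configuration document. The sketch below builds the structure that the S3 PutBucketLifecycleConfiguration API expects, which could be passed to boto3 as `put_bucket_lifecycle_configuration(Bucket=..., LifecycleConfiguration=config)`. It does not call AWS, and the function name build_lifecycle_configuration is hypothetical.

```python
import json


def build_lifecycle_configuration(prefix: str = "vpc-flow/", days: int = 14) -> dict:
    """Build a one-rule lifecycle configuration that expires objects under a prefix."""
    return {
        "Rules": [
            {
                "ID": f"delete-vpc-flow-logs-after-{days}-days",
                "Filter": {"Prefix": prefix},  # limit the rule to this prefix
                "Status": "Enabled",
                "Expiration": {"Days": days},  # expire current versions
            }
        ]
    }


config = build_lifecycle_configuration()
print(json.dumps(config, indent=2))
```

Note that the API call replaces the bucket's entire lifecycle configuration, so any existing rules would need to be included in the same document.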

Verify VPC Flow Log Delivery¶

Check S3 Bucket¶

Navigate to your S3 bucket: projectx-prod-datalake-[username]

Click on the vpc-flow/ folder.

You should see VPC Flow Log files being delivered. Log files are typically organized by:

AWSLogs/[account-id]/vpcflowlogs/[region]/[year]/[month]/[day]/

👉 If you don't see log files immediately, wait a few minutes and generate some network traffic (e.g., SSH into your jumpbox, access web servers) to create network flows that will be logged.
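To know where to look for a given day's files, the path layout above can be computed. This is a small sketch that assumes the vpc-flow/ folder from this guide as the base prefix; the function name flow_log_prefix and the example account ID and region are hypothetical.

```python
from datetime import date


def flow_log_prefix(account_id: str, region: str, day: date,
                    base: str = "vpc-flow/") -> str:
    """Return the S3 key prefix under which one day's flow log files are stored."""
    return f"{base}AWSLogs/{account_id}/vpcflowlogs/{region}/{day:%Y/%m/%d}/"


# Hypothetical account ID and region, for illustration only.
prefix = flow_log_prefix("123456789012", "us-east-1", date(2025, 1, 5))
print(prefix)
```

The resulting string can be pasted into the S3 console search box or used as a prefix filter when listing objects.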

Verify Flow Log Activity¶

Navigate back to VPC ➔ Your VPCs.

Select projectx-prod-vpc.

Click the Flow logs tab.

You should see your flow log with status Active.

👉 Network activity in your VPC is recorded over each aggregation interval and then delivered to S3, so expect a short delay rather than true real-time delivery.

Wazuh Integration¶

Once VPC Flow Logs are being delivered to your S3 bucket, you can configure Wazuh to ingest and analyze these logs.

Configure Wazuh S3 Integration¶

Power on the [project-x-sec-box] VM.

Navigate to Server management ➔ Settings.

Navigate to the <wodle name="syscollector"> block.

Add the following <wodle> section below the <cloudtrail> block or after your CloudTrail configuration:

<wodle name="aws-s3">
  <disabled>no</disabled>
  <interval>10m</interval>
  <run_on_start>yes</run_on_start>
  <skip_on_error>yes</skip_on_error>
  <bucket type="vpcflow">
    <name>projectx-prod-datalake-[username]</name>
    <path>vpc-flow/</path>
    <aws_profile>default</aws_profile>
  </bucket>
</wodle>

👉 Replace [username] with your actual AWS username (e.g., projectx-prod-datalake-johnsmith).

👉 The aws_profile should match the AWS credentials profile configured on the Wazuh server. If using the IAM user created earlier, ensure the AWS credentials are configured in ~/.aws/credentials.

Select Save.

Then Restart Manager to apply changes.
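A malformed block or a leftover placeholder in this configuration is easy to miss. As an optional sanity check before saving, the sketch below parses the block with Python's standard XML parser and reports obvious problems. The function name check_vpcflow_wodle and the specific checks are illustrative assumptions; this is not a Wazuh tool and does not validate the full configuration.

```python
import xml.etree.ElementTree as ET

# The block from this guide, with the [username] placeholder still in place.
WODLE_XML = """
<wodle name="aws-s3">
  <disabled>no</disabled>
  <interval>10m</interval>
  <run_on_start>yes</run_on_start>
  <skip_on_error>yes</skip_on_error>
  <bucket type="vpcflow">
    <name>projectx-prod-datalake-[username]</name>
    <path>vpc-flow/</path>
    <aws_profile>default</aws_profile>
  </bucket>
</wodle>
"""


def check_vpcflow_wodle(xml_text: str) -> list:
    """Return a list of problems found in an aws-s3 wodle block (empty if none)."""
    problems = []
    wodle = ET.fromstring(xml_text.strip())  # raises ParseError if malformed
    if wodle.get("name") != "aws-s3":
        problems.append("wodle name is not aws-s3")
    bucket = wodle.find("bucket[@type='vpcflow']")
    if bucket is None:
        problems.append("no <bucket type=\"vpcflow\"> element found")
        return problems
    if "[username]" in bucket.findtext("name", default=""):
        problems.append("bucket name still contains the [username] placeholder")
    if bucket.findtext("path", default="") != "vpc-flow/":
        problems.append("path does not match the vpc-flow/ folder")
    return problems


print(check_vpcflow_wodle(WODLE_XML))
print(check_vpcflow_wodle(WODLE_XML.replace("[username]", "johnsmith")))
```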

Verify Wazuh Integration¶

Check the Wazuh logs to verify VPC Flow Logs are being ingested:
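One way to do this is sketched below in Python. It assumes the manager log is at the default location /var/ossec/logs/ossec.log and that entries written by the module contain the string aws-s3; both are assumptions to adjust for your installation. The function name aws_s3_lines is hypothetical. Run it on the Wazuh server with permission to read the log.

```python
from pathlib import Path
from typing import Iterable, List

# Assumed default location of the Wazuh manager log.
OSSEC_LOG = Path("/var/ossec/logs/ossec.log")


def aws_s3_lines(lines: Iterable[str], marker: str = "aws-s3") -> List[str]:
    """Keep only the log lines that mention the given marker string."""
    return [line.rstrip("\n") for line in lines if marker in line]


try:
    with OSSEC_LOG.open(errors="replace") as handle:
        recent = aws_s3_lines(handle)[-20:]  # last 20 matching entries
except OSError as exc:
    print(f"Could not read {OSSEC_LOG}: {exc}")
else:
    print("\n".join(recent) or "No aws-s3 entries found yet")
```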

You should see messages indicating VPC Flow Logs are being processed from S3.

👉 Look for entries like "Processing VPC Flow Logs" or "Fetched X events from S3 bucket".

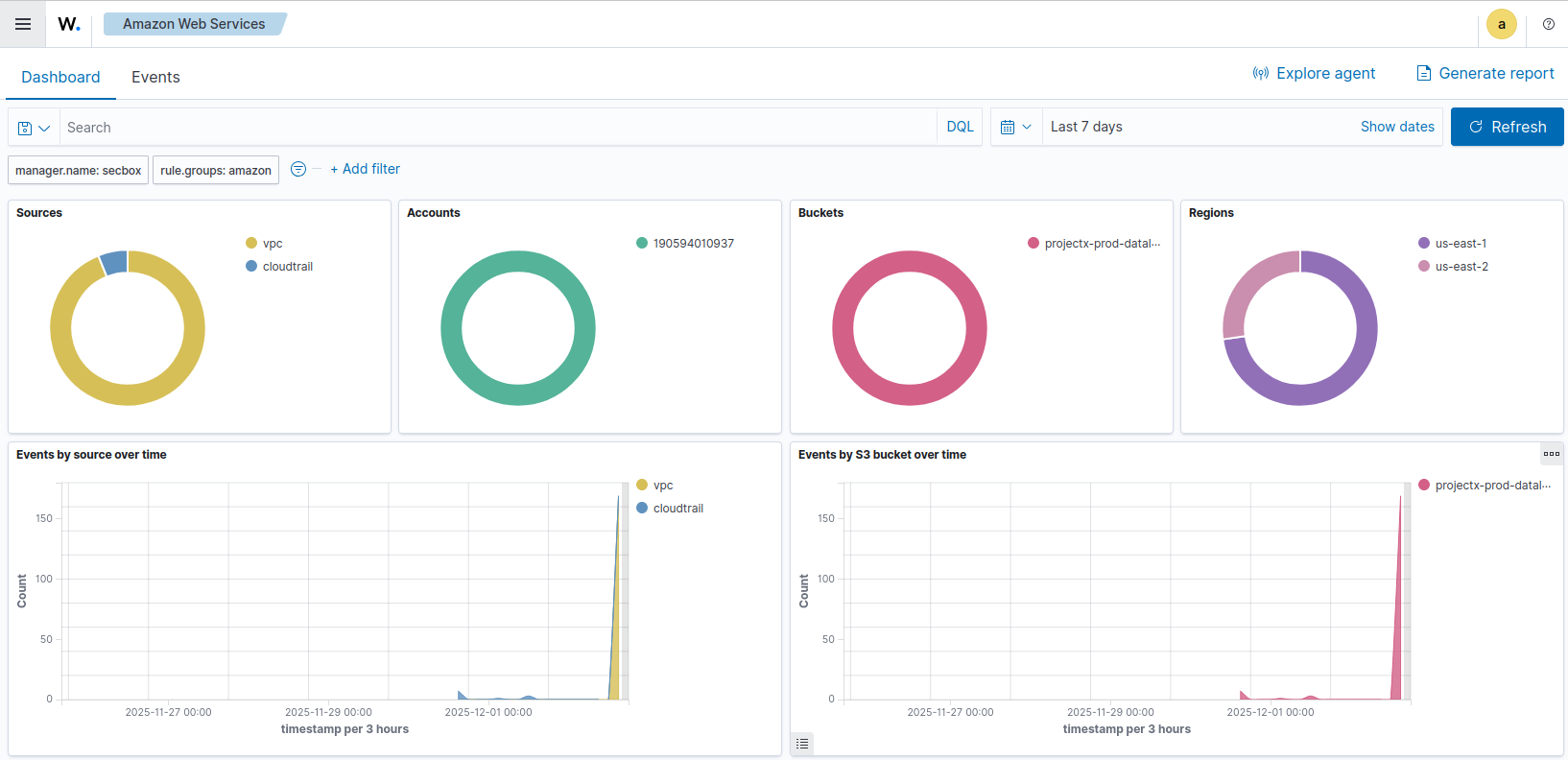

View VPC Flow Log Events in Wazuh¶

Navigate to the Wazuh Dashboard.

Go to Cloud security ➔ Amazon Web Services.

You should start to see VPC Flow Log data being collected.

Adjust the time range if needed.

You can also go to Explore ➔ Discover.

Select the index pattern that includes VPC Flow Log events (typically wazuh-alerts-*).

Search for VPC Flow Log-related events using filters like:

data.aws.vpcEndpointId

Summary¶

Success!

Your VPC network traffic is now being logged, stored in S3, and analyzed by Wazuh for security monitoring and network analysis purposes. The automatic deletion policy ensures storage costs remain manageable while maintaining a 14-day retention period for security analysis.